Google has deepened its commitment to open-source artificial intelligence with the release of Gemma 4, a family of four open-weight models. Built on the research behind Gemini 3 and released under the permissive Apache 2.0 license, the models are a strategic move to bolster the U.S. open-source AI ecosystem. The release arrives at a critical juncture, after several years in which models originating from China have been widely seen as dominating the open-weight field.

Key Takeaways

- Google has launched Gemma 4, a family of four open-weight AI models available under the Apache 2.0 license.

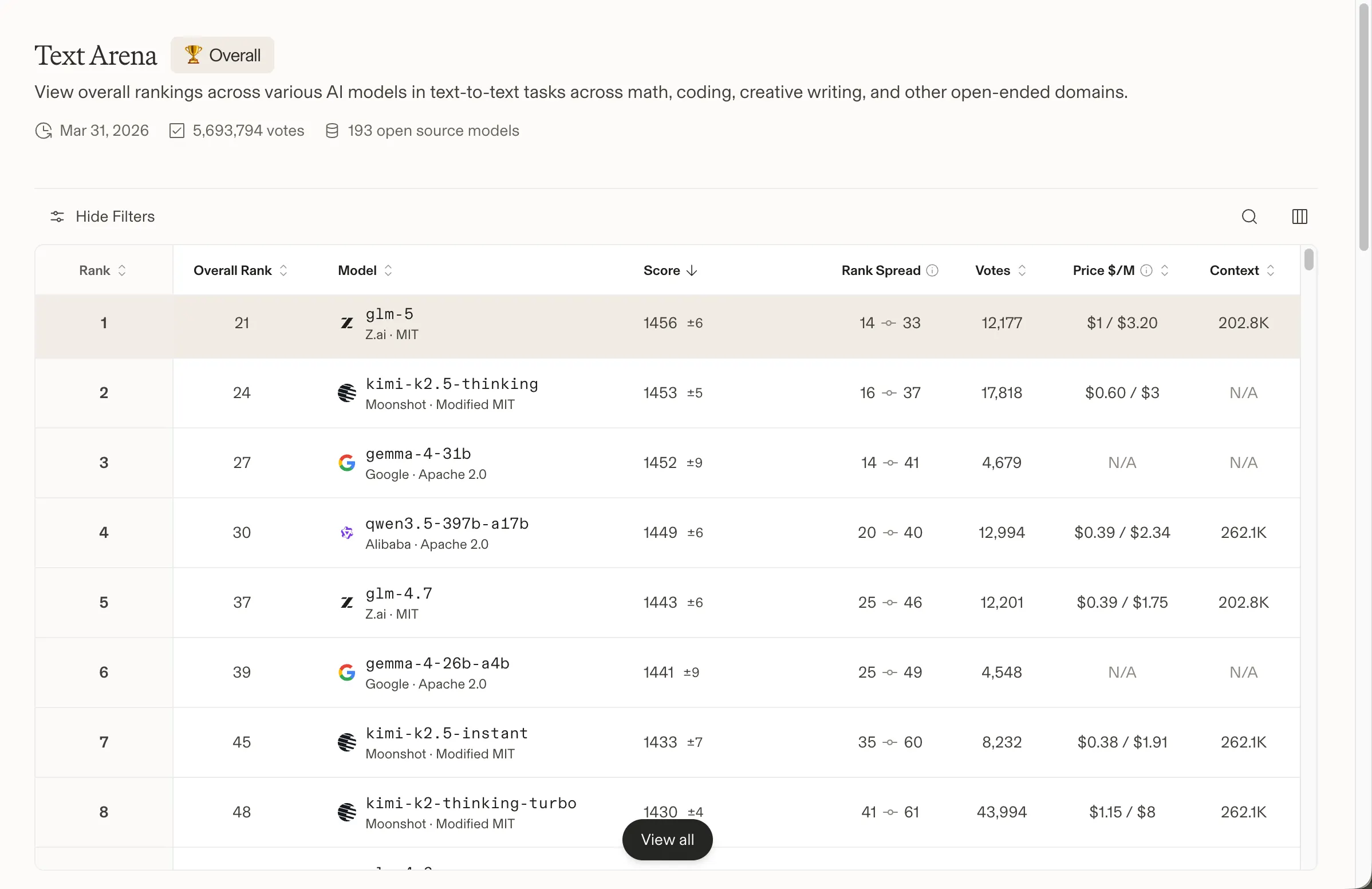

- The Gemma 4 lineup spans devices from mobile phones to high-performance data centers, with the 31B model achieving the third-highest global ranking for open models.

- This release provides a significant boost to U.S.-based open-source AI efforts, positioning Gemma 4 as a strong competitor against leading Chinese models like DeepSeek and Qwen.

The widespread adoption of previous Gemma generations, evidenced by over 400 million downloads and 100,000 community-developed variants, highlights the developer appetite for accessible AI tools. Gemma 4, with its enhanced capabilities and open licensing, aims to capitalize on this momentum.

We just released Gemma 4 — our most intelligent open models to date.

Built from the same world-class research as Gemini 3, Gemma 4 brings breakthrough intelligence directly to your own hardware for advanced reasoning and agentic workflows.

Released under a commercially… pic.twitter.com/W6Tvj9CuHW

— Google (@Google) April 2, 2026

For an extended period, the open-source AI landscape has been heavily influenced by models developed in China, with model families such as DeepSeek, MiniMax, GLM, and Qwen frequently topping leaderboards. Chinese open models grew from a marginal share to a substantial portion of global usage by late 2025, with some even surpassing established models like Meta’s Llama in self-hosted deployments. Llama, once a preferred choice for local execution, lost ground because of its licensing terms and its performance relative to newer competitors.

While other initiatives, like the Allen Institute’s OLMo, attempted to fill the void, they did not achieve widespread traction. OpenAI’s gpt-oss models offered a temporary uplift but were not positioned as direct competitors in the frontier model space. The recent emergence of Arcee AI’s Trinity, a substantial open model from a U.S. startup, signaled a potential resurgence for American contributions. Gemma 4, backed by the extensive resources of Google DeepMind, enters this competitive arena as a prominent U.S.-developed contender.

Google emphasizes that Gemma 4 leverages the same “world-class research and technology” as Gemini 3. The family comprises four models tailored to different applications. E2B and E4B are optimized for mobile and edge devices, offering offline operation with low latency and large context windows. The 26B Mixture of Experts (MoE) model prioritizes speed, while the 31B Dense model is built for maximum performance and quality. Both the 31B Dense and 26B MoE models currently rank highly on AI model evaluation platforms, and Google claims, citing current benchmark data, that they outperform models with significantly higher parameter counts.

Initial testing of Gemma 4 indicates strong capabilities, particularly in code generation, where it produced functional code that needed no immediate debugging. Its creative writing output was serviceable, while its reasoning could be over-engineered for simpler prompts. This suggests that some prompt refinement is needed to get the best results for specific use cases.

The four Gemma 4 variants are designed for broad compatibility. The E2B and E4B models are engineered for edge deployment, supporting native audio input and a 128K context window for offline processing. The larger 26B and 31B models cater to workstations and cloud environments, extending context to 256K and incorporating native function-calling for autonomous agent development. All models possess native image and video processing capabilities. The full-precision weights of the larger models can be accommodated by an 80GB NVIDIA H100 GPU, with quantized versions accessible on consumer hardware.
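The hardware claim above can be sanity-checked with simple arithmetic. The following is a rough sketch, not Google's own sizing method. It assumes that "full-precision" means 16-bit weights (2 bytes per parameter), which the article does not state, and it treats the nominal sizes in the model names (26B and 31B) as the total parameter counts. It counts weights only, so the KV cache and activations would need additional memory.

```python
# Back-of-the-envelope estimate of weight memory for the larger Gemma 4 models.
# Assumptions (not from the article): parameter counts equal the nominal
# sizes in the model names, and "full precision" means 16-bit weights.

H100_MEMORY_GB = 80  # GPU memory figure quoted in the article


def weight_memory_gb(params_billions: float, bytes_per_param: float) -> float:
    """Approximate memory for the weights alone, in decimal gigabytes."""
    return params_billions * bytes_per_param


def fits_on_gpu(params_billions: float, bytes_per_param: float,
                gpu_memory_gb: float = H100_MEMORY_GB) -> bool:
    """True if the weights alone fit within the given GPU memory."""
    return weight_memory_gb(params_billions, bytes_per_param) <= gpu_memory_gb


# Hypothetical formats for illustration: 32-bit, 16-bit, and 4-bit quantized.
FORMATS = {"32-bit": 4.0, "16-bit": 2.0, "4-bit": 0.5}

for name, size_b in (("26B MoE", 26), ("31B Dense", 31)):
    for fmt, nbytes in FORMATS.items():
        gb = weight_memory_gb(size_b, nbytes)
        verdict = "fits" if fits_on_gpu(size_b, nbytes) else "does not fit"
        print(f"{name} at {fmt}: {gb:.1f} GB, {verdict} in {H100_MEMORY_GB} GB")
```

Under these assumptions, 16-bit weights for the 31B model come to about 62 GB, which is consistent with the single-H100 claim, while 32-bit weights would not fit. A 4-bit quantized copy is around 15.5 GB, which is in the range of high-end consumer GPUs.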

The adoption of the Apache 2.0 license is a critical factor, removing the commercial use restrictions associated with previous Gemma versions. This open licensing allows developers to freely modify, distribute, and monetize applications built on Gemma 4. Industry figures, including Hugging Face co-founder Clément Delangue, have lauded the move, emphasizing the growing significance of local AI development. Google DeepMind CEO Demis Hassabis has called Gemma 4 “the best open models in the world for their respective sizes.”

Excited to launch Gemma 4: the best open models in the world for their respective sizes. Available in 4 sizes that can be fine-tuned for your specific task: 31B dense for great raw performance, 26B MoE for low latency, and effective 2B & 4B for edge device use – happy building! pic.twitter.com/Sjbe3ph8xr

— Demis Hassabis (@demishassabis) April 2, 2026

While proprietary models from leading AI labs still hold the edge in certain high-demand benchmarks, Gemma 4 significantly strengthens the open-weight model ecosystem. Its availability on platforms like Google AI Studio, Hugging Face, Kaggle, and Ollama ensures broad accessibility for developers seeking to build and deploy advanced AI solutions on their own infrastructure.

Long-Term Technological Impact

The release of Gemma 4 under a truly permissive license like Apache 2.0 has significant implications for the future of AI development and decentralization. By removing licensing friction, Google is fostering an environment in which a global community of developers can innovate quickly. This could accelerate the integration of advanced AI capabilities into a wider array of applications, from consumer devices to complex enterprise solutions, without the constraints of proprietary ecosystems. It also supports the growing trend of on-device AI and edge computing, enabling more private, secure, and efficient AI processing.

This broad accessibility and the emphasis on open standards could democratize AI development, leading to more diverse and specialized AI tools and a more robust, competitive global AI landscape. Rapid iteration and adaptation of these models by the open-source community could also lead to unforeseen advances in AI research and application, including in fields like blockchain integration, Web3 infrastructure, and AI-driven automation.

Learn more at: decrypt.co