The market for used and refurbished data center GPUs has solidified into a crucial element of AI infrastructure economics. This ecosystem provides a vital channel for capital recovery for hyperscalers rotating through hardware generations and lowers the capital expenditure barrier for nascent cloud operators building GPU capacity. It also generates important pricing signals that influence industry-wide perspectives on GPU investment, depreciation, and hardware lifecycle management. While this business-to-business (B2B) secondary market is substantial, dedicated analysis from an enterprise or infrastructure standpoint remains scarce, with most coverage focused on consumer-grade graphics processing units (GPUs). This analysis aims to map the B2B secondary GPU market, examining its participants, pricing structures, and the implications of GPU longevity for AI infrastructure economics.

Key Takeaways


- The used GPU market operates as a mature B2B ecosystem, featuring certified IT Asset Disposition (ITAD) vendors, enterprise resellers, and formalized buy/sell processes, distinct from consumer-level clearance.

- Pricing for used NVIDIA A100 GPUs typically ranges from approximately $7,800 to $18,900, varying by SKU and condition, with consistent turnover through both formal and informal channels. Used H100 pricing has shown significant volatility, fluctuating from highs of $50,000 during periods of scarcity to substantial discounts as supply increased.

- The debate surrounding GPU depreciation is active and impactful. While Amazon reduced server useful lives in early 2025, Meta extended theirs in the same quarter. Real-world deployments suggest GPUs can maintain economic value for 5–7+ years, influencing investor perspectives and accounting practices.

- For numerous inference and fine-tuning tasks, the critical metric is cost per completed task rather than cost per hour. On that metric, used A100 GPUs can offer better economics than newer hardware that costs roughly twice as much per hour.

- The secondary market is projected to expand as annual hyperscaler GPU expenditures exceed $300 billion and NVIDIA’s accelerated hardware release cycles (Hopper → Blackwell → Rubin → Rubin Ultra) shorten fleet rotation timelines.

What Is the Secondary GPU Market?

The secondary GPU market encompasses the B2B ecosystem for the acquisition and resale of used and refurbished data center GPUs, including models such as A100s, H100s, and H200s, along with their associated server systems. It differs fundamentally from the consumer market for gaming GPUs in its participants, price points, and transaction methods.

The supply side is largely dominated by hyperscalers upgrading their GPU fleets to newer generations. When entities like AWS, Google, or Azure deploy hardware from newer architectural families, the preceding generation of GPUs is channeled into the secondary market via ITAD vendors or direct resale agreements. Enterprises undergoing consolidation or project cessation also contribute to supply, as do Bitcoin miners who may have previously deployed GPUs for rendering or AI-specific computations and are now reassessing their hardware strategy.

Demand is primarily driven by neocloud operators focused on establishing GPU capacity at lower price points than hyperscalers. A significant portion of AI workloads are executed on neocloud infrastructure, with many operators leveraging a combination of new and used hardware. Budget-constrained research institutions, smaller enterprises, and entities in regions with restricted access to new GPU supplies also constitute a notable customer base. The common factor among these buyers is a need for GPU compute combined with constraints on budget or lead time, or workloads that do not require the latest-generation hardware.

Intermediary firms are integral to this market. ITAD specialists such as Procurri and Bitpro procure, test, and resell used GPUs sourced from hyperscalers and large enterprises. Enterprise resellers, including Alta Technologies, which has extensive experience in the custom server industry, buy and sell used NVIDIA DGX servers and individual GPUs, perform multi-point inspections, and offer warranties on refurbished units. Companies like SellGPU and exIT Technologies operate on both sides of the market, adhering to R2v3 certification standards and ensuring compliant data sanitization.

Economically, the distinction between “used” and “refurbished” is significant. “Used” typically implies an as-is condition from a previous deployment, placing greater risk on the buyer. “Refurbished” indicates that the hardware has undergone testing, certification, and potentially includes a warranty. This difference is reflected in pricing, with refurbished H100s frequently commanding 15–25% higher prices than their used counterparts, creating arbitrage opportunities for resellers capable of testing and certifying hardware at scale.

The market is symbiotic: it gives hyperscalers an essential off-take channel for recovering capital from older hardware and gives neoclouds access to discounted GPUs. As an industry practitioner noted at the 2026 PTC Conference, “ITAD relationships will become more important as hardware supply tightens.”

Current Used GPU Pricing—A100, H100, and the Generation Gap

Precise pricing data for used GPUs is often challenging to obtain. Publicly available figures typically represent a composite of reseller asking prices, available refurbished inventory, and marketplace listings, with private transactions potentially clearing at materially different rates. With this caveat, the current market landscape as of early 2026 is detailed below.

NVIDIA A100—The Most Liquid Used GPU

The NVIDIA A100, initially released in May 2020, is now approximately six years old and remains one of the most actively traded enterprise GPUs on the secondary market. Available commercial listings range from around $7,800 for refurbished A100 40GB units to approximately $18,900 for an A100 80GB PCIe card. Private or distressed sales may yield lower figures. A used Gigabyte 8×A100 HGX baseboard was listed on eBay for approximately $14,500, indicating that partial system components and pull-tested inventory circulate through informal channels alongside formal ITAD pipelines.

Rather than characterizing the market as “flooded,” it is more accurate to describe it as exhibiting sustained bidirectional activity. Dedicated resellers, ITAD firms, and marketplace listings all indicate active turnover in A100 inventory. Purchase prices for used A100s are anticipated to decline by an additional 10–15% through 2026 as more enterprises transition to Blackwell-generation hardware.

Comparative cloud rental rates for A100 GPUs range from approximately $1.29 to $3.43 per hour, depending on the provider. With rates this dispersed, the breakeven point for purchasing versus renting depends heavily on utilization.
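The dependence on utilization can be made concrete with a rough breakeven sketch. The purchase price and rental rates are the figures quoted above; the function name, the utilization levels, and the omission of power, hosting, and financing costs are simplifying assumptions made only for illustration.

```python
HOURS_PER_YEAR = 24 * 365


def breakeven_months(purchase_price: float, rental_rate_per_hour: float,
                     utilization: float) -> float:
    """Months of renting after which cumulative rent equals the purchase price.

    Assumes (for illustration) that an owned GPU has no operating cost and
    that rental is billed only for the hours the GPU is actually in use.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    billed_hours_per_month = HOURS_PER_YEAR / 12 * utilization
    return purchase_price / (rental_rate_per_hour * billed_hours_per_month)


if __name__ == "__main__":
    price = 18_900  # listed A100 80GB PCIe price quoted above
    for rate in (1.29, 3.43):  # low and high ends of the quoted rental range
        for utilization in (0.25, 0.90):  # hypothetical utilization levels
            months = breakeven_months(price, rate, utilization)
            print(f"${rate:.2f}/hr at {utilization:.0%} utilization: "
                  f"{months:.1f} months to break even")
```

Under these assumptions, halving utilization doubles the time to break even, which is why a lightly used GPU is often cheaper to rent than to own.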

The A100 40GB variant is optimized for LoRA/QLoRA fine-tuning of models with 7B–13B parameters and for inference on quantized models. The 80GB version extends capabilities to models up to 65B parameters, supports simultaneous multi-model inference, and enables larger batch sizes.

NVIDIA H100—Volatile and Regime-Dependent

The H100, launched in March 2023, has experienced significant secondary market pricing volatility. Used and refurbished units reached as much as $50,000 per GPU during the scarcity of mid-2024, then fell sharply as supply increased and buyer leverage returned. Retail prices for the H100 remained relatively stable between $25,000 and $40,000 throughout this period, masking the underlying turbulence in the secondary market.

Cloud rental pricing followed a similar trajectory, falling from approximately $7–$10 per hour at its 2023 launch to around $2–$4 per hour by late 2025, with some providers offering spot rates below $2 per hour. Silicon Data recorded a brief rebound in early 2026, indicating that the decline has been volatile rather than steady. Reports suggest AWS reduced H100 pricing by approximately 44% in June 2025, which contributed to a broader market adjustment.

At the server level, which is the typical B2B transaction unit, a used 8-GPU H100 server trades at roughly $150,000–$180,000. In comparison, a new B300 server is priced at approximately $500,000. Refurbished GPU servers from vendors such as Alta Technologies and NewServerLife offer savings of 40–70% over new original equipment manufacturer (OEM) systems, typically including warranties and certified components.

The Cost-Per-Task Argument

For many AI workloads, including inference, fine-tuning, and batch processing, the NVIDIA A100 can achieve comparable results to the H100 at approximately half the cost. The critical performance metric in these scenarios is the cost per completed task, rather than the cost per hour. An A100 operating at $1.49/hr may deliver superior overall economics compared to an H100 at $2.99/hr for inference tasks where the H100’s performance advantage does not proportionally decrease task completion time. Buyers who prioritize cost per hour exclusively may incur unnecessary expenditure for capabilities their specific workloads do not require.
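The cost-per-task comparison above can be sketched as follows. The hourly rates are the ones quoted in the text; the task duration and the H100 speedup factors are hypothetical values chosen only to show where the comparison flips.

```python
def cost_per_task(rate_per_hour: float, task_hours: float) -> float:
    """Cost of one completed task: hourly rate times the hours it takes."""
    return rate_per_hour * task_hours


def breakeven_speedup(cheaper_rate: float, pricier_rate: float) -> float:
    """Speedup the pricier GPU needs to match the cheaper one per task."""
    return pricier_rate / cheaper_rate


if __name__ == "__main__":
    a100_rate, h100_rate = 1.49, 2.99  # hourly rates quoted above
    a100_hours = 1.0                   # hypothetical task duration on an A100
    for speedup in (1.5, 2.0, 3.0):    # hypothetical H100 speedups
        a100_cost = cost_per_task(a100_rate, a100_hours)
        h100_cost = cost_per_task(h100_rate, a100_hours / speedup)
        cheaper = "A100" if a100_cost < h100_cost else "H100"
        print(f"{speedup:.1f}x speedup: A100 ${a100_cost:.2f}, "
              f"H100 ${h100_cost:.2f} -> {cheaper} cheaper per task")
    print(f"break-even speedup: {breakeven_speedup(a100_rate, h100_rate):.2f}x")
```

At these rates the H100 has to finish a task roughly twice as fast as the A100 before it becomes the cheaper option per task.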

A Note on Component Costs and Tariffs

Used GPU servers may not always be immediately deployable without additional component investment. Memory and storage upgrades are often necessary, and these component markets have experienced increased pricing pressure. The cost of DDR4/DDR5 and server SSDs saw a notable increase from late 2025 into early 2026 due to strained supply driven by AI demand, with some projections indicating potential doubling of server memory prices by 2026. High Bandwidth Memory (HBM) pricing and availability also tightened as production shifted towards AI-grade memory. These component cost increases can diminish the cost-saving advantage of acquiring used hardware if the server requires substantial upgrades.

Furthermore, tariff policies enacted in 2025 introduced uncertainty and upward pricing pressure on certain GPU and server supply chains, with external estimates often placing the impact in the range of 20–40% for affected categories.

The GPU Depreciation Debate—How Long Do Data Center GPUs Actually Last?

The economic lifespan of GPUs is a pivotal and unresolved question in AI infrastructure economics. The determination of this lifespan directly impacts the value proposition of the secondary market, the accuracy of hyperscaler financial reporting, and the viability of the “value cascade” model upon which the used GPU thesis relies. Currently, there is no industry consensus on this matter.

The Accounting Backdrop

Between 2020 and 2024, hyperscalers transitioned their server depreciation schedules from 3–4 years to 5–6 years. AWS initiated this trend in January 2020, extending its schedule from 3 to 4 years. By 2023, all three major hyperscalers—AWS, Google, and Azure—had standardized on 6-year depreciation schedules.

However, in early 2025, this uniformity fractured. Amazon announced a reduction in the useful life of a subset of servers from 6 to 5 years, citing “the increased pace of technology development, particularly in the area of artificial intelligence and machine learning,” a change that reduced net income by $677 million for the first nine months of 2025. In the same quarter, Meta extended its schedule to 5.5 years, recording a $2.9 billion reduction in depreciation. The divergence suggests that depreciation schedules function partly as an earnings management tool rather than purely as an engineering assessment of hardware lifespan.
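A minimal straight-line depreciation sketch shows why a one-year change in useful life moves reported earnings. The fleet cost is a hypothetical figure, and the sketch ignores the fact that real accounting changes apply prospectively to remaining book value, so it illustrates direction and rough magnitude only.

```python
def annual_straight_line(cost: float, useful_life_years: float,
                         salvage_value: float = 0.0) -> float:
    """Annual straight-line depreciation expense."""
    if useful_life_years <= 0:
        raise ValueError("useful_life_years must be positive")
    return (cost - salvage_value) / useful_life_years


if __name__ == "__main__":
    fleet_cost = 10_000_000_000  # hypothetical $10B server fleet
    for years in (5, 5.5, 6):    # useful lives discussed above
        expense = annual_straight_line(fleet_cost, years)
        print(f"{years}-year life: ${expense / 1e9:.2f}B per year")
```

Shortening the schedule raises the annual expense and lowers reported income, while lengthening it does the opposite.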

The Burry Argument

Investor Michael Burry has articulated a particularly short-lifecycle thesis, asserting that hyperscalers are overstating earnings by depreciating hardware over 5–6 years when NVIDIA’s chip release cycle implies an economic lifespan of 2–3 years. He estimates approximately $176 billion in understated depreciation across the industry between 2026 and 2028, a position he has supported with put options on NVIDIA and Palantir.

The underlying logic is that NVIDIA’s accelerating cadence of new GPU generations (Hopper in 2022, Blackwell in 2024, Rubin in 2026, Rubin Ultra in 2027), each offering significant performance and efficiency improvements, renders older hardware economically obsolete well before a 5–6 year depreciation schedule ends. Blackwell, for instance, provides up to 25 times better energy efficiency than Hopper for specific inference workloads.

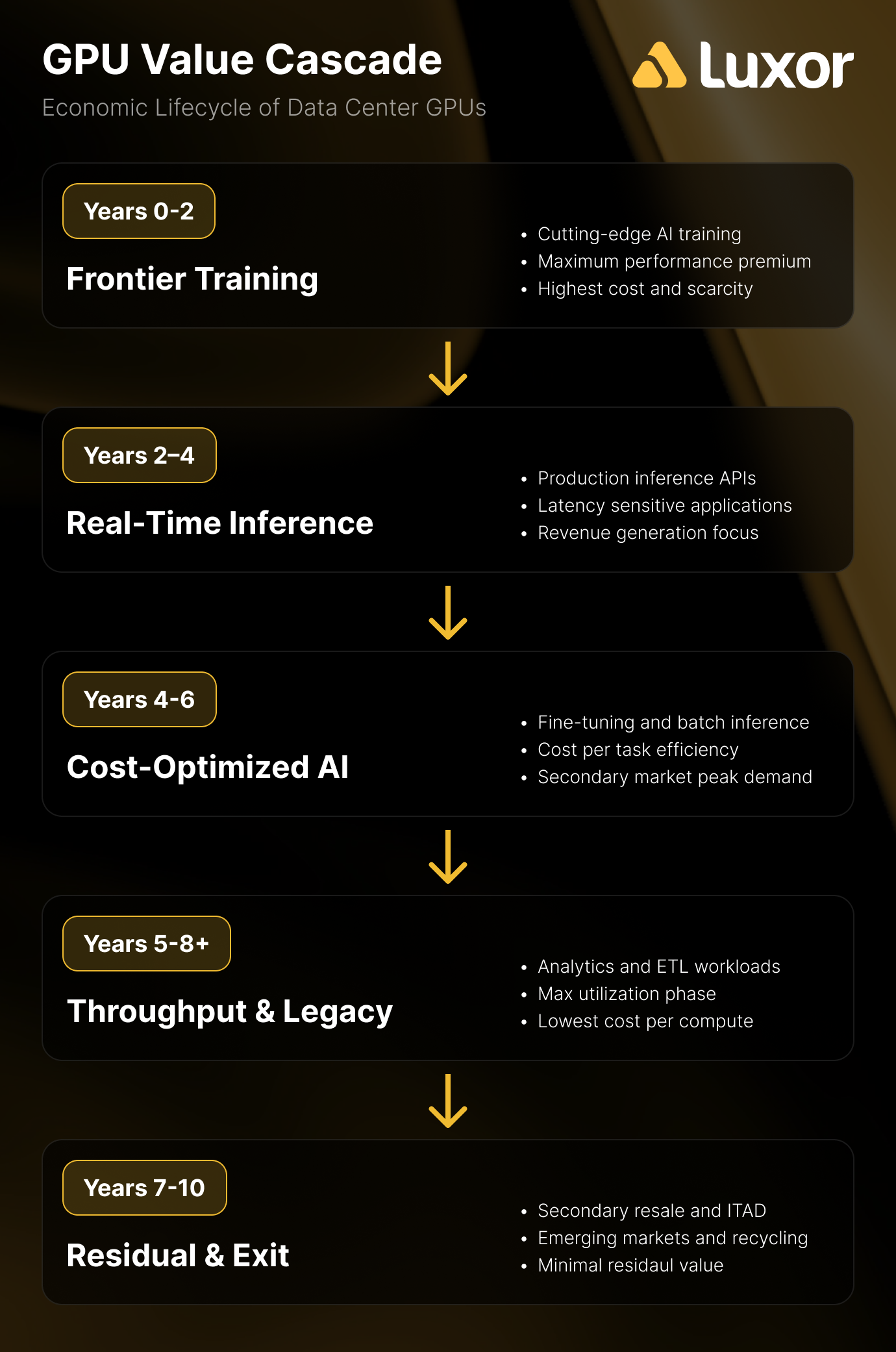

The Counter-Argument—The Value Cascade

The opposing viewpoint does not dispute that GPUs lose frontier performance capabilities over time. Instead, it emphasizes that frontier capability is not the sole determinant of economic value. The value cascade model, initially proposed by theCUBE Research and supported by empirical deployment data, outlines the economic lifecycle of GPUs across three distinct phases:

- Years 1–2: Frontier model training, characterized by the highest value and most demanding computational workloads.

- Years 3–4: High-value real-time inference, as GPUs transition from training frontier models to executing production inference at scale.

- Years 5–6: Batch inference and analytics, throughput-oriented workloads where cost per task matters more than latency.

Empirical evidence supports longer useful lifespans than the 2–3 year estimate suggested by skeptics. Azure retired VMs powered by K80, P100, and P40 GPUs (launched between 2014 and 2016) in August and September 2023, implying service lives of 7–9 years. Azure retired V100-powered NCv3-series VMs in September 2025, approximately 7.5 years after the V100’s introduction. The NVIDIA T4, released in September 2018, continues to generate rental revenue on platforms like Vast.ai at approximately $0.15/hr, more than 7 years after launch. Furthermore, CoreWeave reported that H100s coming off expired 2022 contracts were immediately rebooked at 95% of their original pricing.

Recent statements from key industry figures reinforce the longer-lifecycle perspective. At NVIDIA GTC 2026, CoreWeave CEO Michael Intrator envisioned a future where operators “pair older GPUs to handle some workloads while the leading edge tech/GPUs will focus on leading edge workloads,” stressing the need to “milk all the value that we can to invest in the next wave.” Gavin Baker of Atreides Management, speaking at the same event, posited that the disaggregation of inference workloads will enable further optimization of older GPUs, suggesting that “the 4–5 year useful lives may be more like 8–10.”

Software innovations further bolster this argument. GPU fractioning—the capability to run multiple workloads on a single GPU—and advanced scheduling techniques are extending the effective throughput of older hardware. At GTC 2026, the Danish Centre for AI Innovation demonstrated that GPU fractioning on B200s increased inference token throughput by approximately 75%, while Run:AI’s orchestration solutions achieved up to 10x greater GPU availability and 5x improvements in utilization. Enhanced software-driven utilization and resource management can significantly strengthen the economic rationale for maintaining older GPUs in production environments.

The Honest Answer

The precise economic lifespan of GPUs in AI workloads is not yet established, because intensive AI deployment has a relatively short history. Meta’s published data on H100 reliability, which indicated approximately a 9% annualized failure rate under heavy utilization during Llama 3 405B training, adds hardware attrition as a further variable. Even so, legacy GPUs retain significant economic value for considerably longer than the “worthless after the next generation” narrative suggests. Market data and deployment evidence collectively point toward useful economic lives of 5–7+ years, with the exact duration influenced by workload composition, failure rates, and advancements in software optimization.
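To see how a failure rate of that size interacts with a multi-year lifespan, the sketch below compounds the quoted 9% figure over several years. Treating the rate as constant and independent from year to year, and assuming failed units are never repaired or replaced, are strong simplifications made here for illustration. The figure was observed under heavy training load and may not carry over to lighter inference duty.

```python
def surviving_fraction(annual_failure_rate: float, years: float) -> float:
    """Share of a fleet with no failure after `years`.

    Assumes a constant, independent annual failure rate and no repairs,
    which are illustrative simplifications rather than measured behavior.
    """
    if not 0 <= annual_failure_rate <= 1:
        raise ValueError("annual_failure_rate must be in [0, 1]")
    if years < 0:
        raise ValueError("years must be non-negative")
    return (1.0 - annual_failure_rate) ** years


if __name__ == "__main__":
    rate = 0.09  # annualized failure rate quoted above
    for years in (1, 3, 5, 7):
        share = surviving_fraction(rate, years)
        print(f"after {years} years: {share:.0%} of units without a failure")
```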

Why the Secondary GPU Market Matters for AI Infrastructure

The secondary GPU market is not a peripheral concern but a fundamental component of AI infrastructure economics. It has a direct influence on capital expenditure planning, the viability of neocloud operations, and strategic hardware lifecycle management.

Neoclouds are the primary demand driver. With approximately one-third of AI workloads operating on neocloud platforms rather than hyperscalers, the capital expenditure equation is critically important. The cost differential between a used H100 server ($150,000–$180,000) and a new B300 server ($500,000) represents a fundamental divergence in business case feasibility, often determining whether neocloud operators can establish capacity at all.

The workload matching argument is underappreciated. Not all AI workloads necessitate the latest generation hardware. Many inference and fine-tuning tasks perform optimally on A100s or even older GPU architectures. Market analysts have observed that “inference is still very early days” with significant potential in edge deployments—which do not require frontier hardware. While homogeneous infrastructure simplifies operations, shifting workload economics increasingly necessitate “heterogeneous fleets,” a direct endorsement of mixing new and used hardware. The key consideration is not simply whether an H100 is superior to an A100, but whether the H100’s performance advantage justifies a 2–3x cost premium for a specific workload. For a substantial segment of B2B buyers, the answer is negative.

Capital efficiency and time-to-value. The secondary market provides immediate availability, contrasting with the protracted lead times often associated with new hardware procurement. For organizations prioritizing rapid AI compute deployment, time-to-value frequently outweighs the imperative of possessing the absolute latest generation technology.

The hyperscaler symbiosis. Hyperscalers phasing out older GPU fleets require efficient off-take channels to facilitate capital recovery. The ITAD sector and the secondary market fulfill this requirement, enhancing capital recovery for hyperscalers while supplying neoclouds with discounted hardware. This dynamic fosters a capital recycling loop that benefits all participants.

The Bitcoin miner angle. Miners who invested in GPU infrastructure for rendering or AI compute may transition to the sell side of this market as they evaluate hardware refresh strategies or exit GPU operations entirely. Understanding secondary market pricing and optimal timing is directly relevant to their capital recovery and exit planning.

What Could Change—Risks and Open Questions in the Used GPU Market

The continued expansion of the secondary market is not without potential risks. Several factors could accelerate GPU depreciation, compress resale values, or disrupt the supply-demand equilibrium that currently underpins the attractiveness of used GPUs.

NVIDIA’s accelerating release cadence presents the most direct risk. The shift towards an annual product cycle (Hopper in 2022, Blackwell in 2024, Rubin in 2026, and Rubin Ultra in 2027) shortens the window during which any given generation remains at the “frontier.” Each new generation sends a wave of previous-generation hardware into the secondary market. If that supply cascade outpaces demand absorption, resale values are likely to compress. NVIDIA CEO Jensen Huang’s GTC 2026 keynote underscored this trajectory, highlighting the Rubin architecture’s substantial performance gains and liquid cooling requirements, along with a significant reduction in installation time. When new hardware offers such marked improvements in performance and deployment simplicity, the residual value of older hardware becomes less certain.

Custom AI ASICs represent a theoretical threat to the value cascade model, though current pricing has not yet validated this disruptive potential. Hyperscalers are increasingly deploying custom inference chips (e.g., AWS Inferentia/Trainium, Microsoft Maia, Meta MTIA) engineered to surpass older GPUs in specific inference workloads. In principle, a purpose-built inference ASIC should offer a lower total cost of ownership compared to general-purpose GPUs. However, initial pricing for custom silicon has largely aligned with new GPU-based builds, limiting any significant cost advantage over used GPUs. AI ASICs currently remain a niche segment (with Cerebras being a notable exception) and have not yet substantially impacted secondary GPU demand, though their trajectory warrants close monitoring as production scales.

Market saturation poses a potential challenge based on sheer volume. With annual hyperscaler GPU expenditures exceeding $300 billion and an average cost per GPU of around $35,000, the industry procures more than 8 million GPUs annually ($300 billion ÷ $35,000 ≈ 8.6 million). If a significant portion of this hardware cascades to secondary markets on a 3–4 year cycle, the volume of used equipment could exceed demand, leading to price compression. Reports indicate that companies are tripling compute capacity year-over-year and projecting substantial increases in future power consumption, so the scale of today’s deployments will translate into significant secondary-market volumes in the coming years.
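The volume argument is simple arithmetic, sketched below. The spend and average price are the figures quoted above; the share of units that eventually reaches the secondary market is a hypothetical parameter, since the article gives no such figure.

```python
def implied_units(annual_spend_usd: float, avg_price_usd: float) -> float:
    """GPUs implied by total annual spend at a given average unit price."""
    if avg_price_usd <= 0:
        raise ValueError("avg_price_usd must be positive")
    return annual_spend_usd / avg_price_usd


def secondary_supply(units: float, resale_share: float) -> float:
    """Units reaching the secondary market for a given resale share."""
    if not 0 <= resale_share <= 1:
        raise ValueError("resale_share must be in [0, 1]")
    return units * resale_share


if __name__ == "__main__":
    units = implied_units(300e9, 35_000)  # figures quoted above
    print(f"implied purchases: {units / 1e6:.1f} million GPUs per year")
    for share in (0.10, 0.25, 0.50):      # hypothetical resale shares
        resold = secondary_supply(units, share)
        print(f"{share:.0%} resold: {resold / 1e6:.1f} million used GPUs")
```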

Component inflation narrows the savings spread. Increases in memory and storage prices can diminish the cost advantage of purchasing used hardware. Used GPU servers requiring memory (DIMM) or storage (SSD) upgrades at inflated component prices become less economically appealing compared to new systems that include all necessary components. The tension between typical data center hardware lifecycles of 5–6 years and the rapid evolution of AI technology presents an ongoing challenge for co-designing systems for both performance and flexibility.
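The way upgrade costs narrow the savings spread can be sketched as below. All prices and upgrade amounts are hypothetical round numbers picked to fall inside the 40–70% savings range cited earlier. They are not quotes from any vendor.

```python
def effective_savings(used_price: float, new_price: float,
                      upgrade_cost: float = 0.0) -> float:
    """Fraction saved versus a new system after paying for upgrades."""
    if new_price <= 0:
        raise ValueError("new_price must be positive")
    return 1.0 - (used_price + upgrade_cost) / new_price


if __name__ == "__main__":
    used_price, new_price = 60_000, 100_000  # hypothetical server prices
    for upgrade in (0, 10_000, 25_000):      # hypothetical DIMM/SSD spend
        savings = effective_savings(used_price, new_price, upgrade)
        print(f"upgrade ${upgrade:,}: {savings:.0%} effective savings")
```

The same function can be used with negotiated quotes to check whether a used system still clears a buyer's minimum savings threshold once upgrades are priced in.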

While these risks are present, they do not invalidate the secondary market. Instead, they contribute to volatility and uncertainty in GPU resale values, underscoring the importance of a comprehensive understanding of the market dynamics for both infrastructure buyers and sellers.

The Most Expensive GPU Is the One Deployed Against the Wrong Workload

The secondary GPU market is not a peripheral segment but an integral component of AI infrastructure economics, influencing capital expenditure planning, depreciation accounting, neocloud viability, and hardware lifecycle strategies across the industry.

For organizations procuring GPU compute resources in volume, understanding the economic trade-offs between used and new hardware has become a core competency rather than a secondary cost optimization strategy. The ITAD ecosystem has matured significantly, pricing data is becoming increasingly transparent, and certified vendors and formalized transaction processes have moved the market beyond its unmanaged phase.

The question of GPU longevity remains a subject of ongoing debate. However, empirical evidence—including Azure’s reported 7–9 year GPU service lives, CoreWeave’s 95% rebooking rates for previously utilized hardware, T4 GPUs still generating rental revenue after over 7 years, and executive commentary on pairing older GPUs with frontier hardware for workload-specific optimization—suggests longer useful economic lives than the 2–3 year “disposable after next generation” narrative implies. Organizations that adopt a strategy aligned with the value cascade model—progressing from training through inference to batch processing—are likely to extract greater value from their GPU investments compared to those treating each hardware generation as disposable.

The market is undergoing rapid evolution. Pricing, supply dynamics, and the competitive landscape between new and used hardware are expected to shift significantly in the coming months. The most costly GPU is ultimately not the one with the highest sticker price, but rather the one deployed for an inappropriate workload at the incorrect stage of its hardware lifecycle. The existence and growth of the secondary market reflect the industry’s ongoing real-time learning process regarding these critical economic principles.

Original article: hashrateindex.com