Microsoft is adding multi-model workflows to its Copilot Researcher tool, with the goal of improving the accuracy and depth of AI-generated reports. By running leading models such as OpenAI’s GPT and Anthropic’s Claude in tandem, Microsoft aims to overcome the limitations of single-model systems, including AI hallucinations and unreliable citations.

Key Takeaways

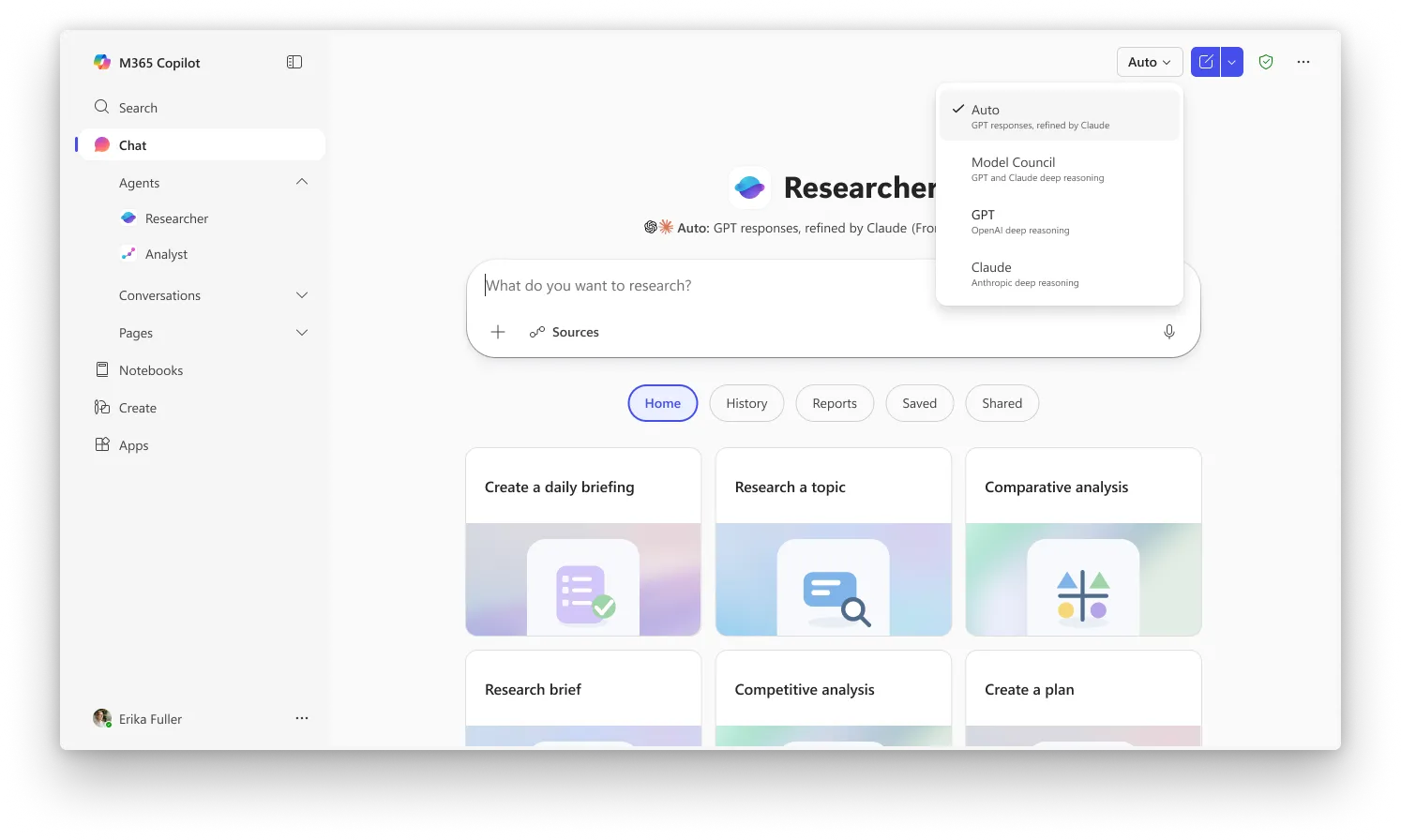

- Microsoft’s Copilot Researcher now utilizes two distinct modes, “Critique” and “Council,” to combine GPT and Claude for research tasks.

- The “Critique” mode involves a sequential process where one AI generates a draft and another refines it, improving accuracy and reducing errors.

- The “Council” mode runs multiple AI models in parallel, with a third AI evaluating their outputs to identify discrepancies and unique insights.

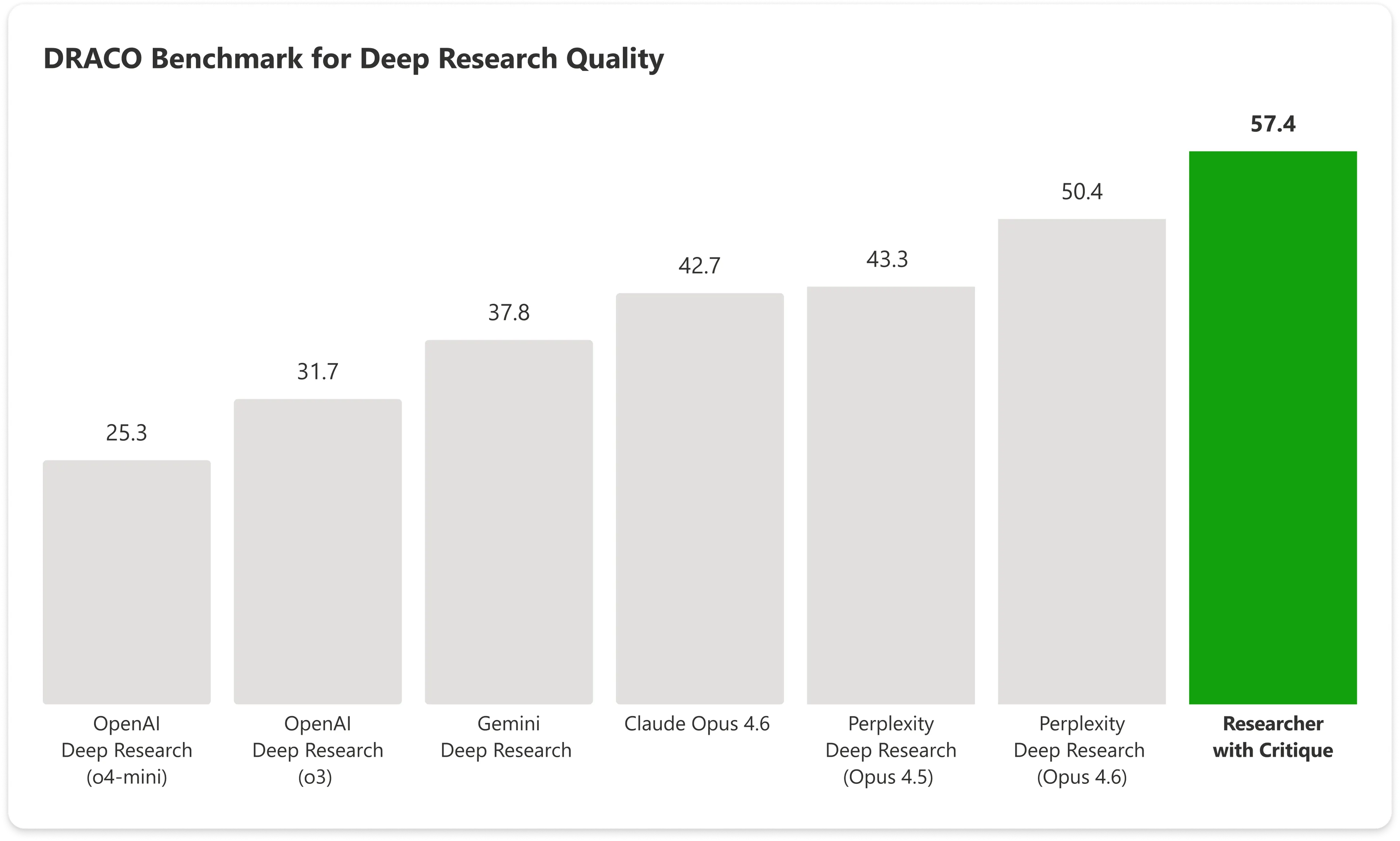

- On the DRACO benchmark, Copilot with “Critique” scored higher than standalone single-model systems.

- The development signifies a shift towards AI orchestration and sophisticated workflow design, moving beyond the dominance of individual model capabilities.

The market for AI research agents is intensely competitive, with major players like Google, OpenAI, xAI, Perplexity, and Anthropic continuously releasing more advanced tools. Microsoft’s latest move challenges the prevailing strategy of relying on a single, powerful AI model. Instead, the new “Critique” and “Council” features in Copilot’s Researcher tool orchestrate multiple models, specifically GPT and Claude, so that they either work on a research task together or produce separate reports for comparison.

Introducing Critique, a new multi-model deep research system in M365 Copilot.

You can use multiple models together to generate optimal responses and reports.

— Satya Nadella (@satyanadella) March 30, 2026

“Critique is a new multi model deep research system designed for complex research tasks. It separates generation from evaluation and utilizes a combination of models from Frontier labs, including Anthropic and OpenAI,” Microsoft stated. This approach divides the research process: one model handles the initial planning, data retrieval, and draft generation, while a second model acts as an expert reviewer, ensuring factual accuracy and appropriate sourcing before the final output is delivered to the user.

This design targets two persistent problems with AI-generated content: factual inaccuracies (hallucinations) and weak or fabricated citations. Traditional AI research tools typically use a single model for the entire process, so nothing independently verifies the output. By adding a sequential review step, Microsoft’s “Critique” mode has a second model check the report before it reaches the user.

The “Critique” workflow involves GPT initially planning the research, gathering sources, and producing a preliminary report. Subsequently, Claude steps in to meticulously review the draft, verifying factual claims, citation quality, and adherence to the original query. Microsoft notes that the roles can be reversed, allowing Claude to draft and GPT to critique, though the current default places GPT in the generative role and Claude in the evaluative one.
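The draft-then-review sequence described above can be sketched in a few lines. This is a minimal illustration, not Microsoft’s implementation: `run_critique`, `CritiqueResult`, and the two stub functions are hypothetical names, and each "model" is simply a function from prompt text to response text standing in for a real GPT or Claude call.

```python
from dataclasses import dataclass
from typing import Callable

# Assumption: a "model" is any function that maps a prompt to a response.
Model = Callable[[str], str]


@dataclass
class CritiqueResult:
    draft: str   # output of the generating model
    final: str   # output after the reviewing model has checked it


def run_critique(query: str, drafter: Model, reviewer: Model) -> CritiqueResult:
    """Run the generator first, then pass its draft to the reviewer."""
    draft = drafter(f"Plan, gather sources, and draft a report on: {query}")
    final = reviewer(
        f"Original query: {query}\n"
        "Verify factual claims and citations, then return the corrected report.\n"
        f"Draft:\n{draft}"
    )
    return CritiqueResult(draft=draft, final=final)


# Hypothetical stand-ins so the sketch runs without any external service.
def stub_generator(prompt: str) -> str:
    return "DRAFT: claim A [1]; claim B [citation needed]"


def stub_reviewer(prompt: str) -> str:
    draft = prompt.split("Draft:\n", 1)[1]
    # The stub "review" drops the unsupported claim and relabels the report.
    return draft.replace("; claim B [citation needed]", "").replace("DRAFT", "REVIEWED")


result = run_critique("multi-model research agents", stub_generator, stub_reviewer)
print(result.draft)
print(result.final)
```

Because the two roles are plain arguments, reversing them (Claude drafting, GPT critiquing) amounts to swapping the `drafter` and `reviewer` parameters.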

Microsoft’s multi-model strategy has produced strong results. On the DRACO benchmark, which covers 100 complex research tasks across domains such as medicine, law, and technology, Copilot with the “Critique” feature scored 57.4. Anthropic’s Claude Opus, running as a standalone model, scored 42.7 on the same benchmark. Microsoft’s combined system outperformed the next highest-scoring entry by nearly 14%, highlighting the effectiveness of this collaborative approach.
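To put the two reported scores side by side, the short calculation below works out the absolute and relative gap between them. Only the two scores come from the article; the variable names are illustrative. The "nearly 14%" margin over the next highest-scoring entry is not reproduced, because that entry’s score is not given here.

```python
# Scores as reported for the DRACO benchmark.
critique_score = 57.4      # Copilot Researcher with "Critique"
claude_opus_score = 42.7   # Claude Opus as a standalone model

# Absolute gap in benchmark points.
point_gap = critique_score - claude_opus_score

# Relative improvement over the standalone score, in percent.
relative_gain = point_gap / claude_opus_score * 100

print(f"Gap: {point_gap:.1f} points ({relative_gain:.1f}% relative)")
```

The distinction matters when reading benchmark claims: a difference in points and a percentage improvement are different quantities and should not be compared directly.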

The “Council” feature offers an alternative methodology. It enables GPT and Claude to conduct their research simultaneously. A third AI model then analyzes both comprehensive reports, summarizing areas of agreement, disagreement, and any unique insights each AI model provided that the other missed. This “Model Council” functionality effectively automates the process of comparing and contrasting outputs from different AI systems, a task previously left to human users.
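The parallel-then-compare pattern can be sketched as follows. This is a simplified illustration under stated assumptions: each report is reduced to a mapping from topic to conclusion, the two researchers are hypothetical stub functions, and a plain dictionary comparison (`compare_reports`) stands in for the third AI model that the real feature uses as a judge.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List

# Assumption: a report is simplified to {topic: conclusion}.
Report = Dict[str, str]
Researcher = Callable[[str], Report]


def compare_reports(a: Report, b: Report) -> Dict[str, List[str]]:
    """Stand-in for the judging model: sort topics into four buckets."""
    shared = a.keys() & b.keys()
    return {
        "agree": sorted(t for t in shared if a[t] == b[t]),
        "disagree": sorted(t for t in shared if a[t] != b[t]),
        "only_first": sorted(a.keys() - b.keys()),
        "only_second": sorted(b.keys() - a.keys()),
    }


def run_council(query: str, first: Researcher, second: Researcher) -> Dict[str, List[str]]:
    """Run both researchers concurrently, then compare their reports."""
    with ThreadPoolExecutor(max_workers=2) as pool:
        future_a = pool.submit(first, query)
        future_b = pool.submit(second, query)
        return compare_reports(future_a.result(), future_b.result())


# Hypothetical stubs in place of real model calls.
def stub_first(query: str) -> Report:
    return {"market size": "growing", "main risk": "hallucination", "pricing": "per seat"}


def stub_second(query: str) -> Report:
    return {"market size": "growing", "main risk": "weak citations", "adoption": "early"}


summary = run_council("AI research agents", stub_first, stub_second)
for bucket, topics in summary.items():
    print(bucket, topics)
```

A real judge would compare free-text reports rather than exact dictionary values, which is why the feature relies on a third model instead of a mechanical diff.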

While “Critique” fosters AI collaboration, “Council” encourages AI competition to surface diverse perspectives. “Critique” is the default setting in Researcher, whereas “Council” requires explicit selection. Both features are currently accessible to users in Microsoft’s “Frontier” early-access program, which necessitates a Microsoft 365 Copilot license.

Microsoft’s strategic partnership with OpenAI, coupled with its integration of Anthropic’s Claude, shows where the company is placing its bets. It appears to be investing heavily in the orchestration layer, the logic that routes each task to the most suitable model or combination of models. On this view, the future of AI may lie less in the supremacy of any single model and more in the architecture that manages and combines the capabilities of multiple AI systems, a paradigm that echoes developments in distributed computing and Layer 2 solutions in blockchain technology.
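At its simplest, an orchestration layer is a dispatch step that chooses a workflow for each request. The sketch below is purely illustrative: `Mode`, `WORKFLOWS`, and `route` are hypothetical names, and the workflow functions are placeholders that return a description instead of calling any model. The only detail taken from the article is that “Critique” is the default and “Council” must be selected explicitly.

```python
from enum import Enum
from typing import Callable, Dict


class Mode(Enum):
    CRITIQUE = "critique"
    COUNCIL = "council"


# Placeholder workflows; a real system would invoke models here.
def critique_workflow(query: str) -> str:
    return f"critique: draft, then review, for '{query}'"


def council_workflow(query: str) -> str:
    return f"council: parallel reports, then comparison, for '{query}'"


# Dispatch table mapping each mode to the workflow that handles it.
WORKFLOWS: Dict[Mode, Callable[[str], str]] = {
    Mode.CRITIQUE: critique_workflow,
    Mode.COUNCIL: council_workflow,
}


def route(query: str, mode: Mode = Mode.CRITIQUE) -> str:
    """Send the query to the selected workflow; Critique is the default."""
    return WORKFLOWS[mode](query)


print(route("compare battery chemistries"))
print(route("compare battery chemistries", Mode.COUNCIL))
```

Keeping the routing in a table means a new workflow can be added by registering one more entry, without touching the callers.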

Long-Term Technological Impact

Microsoft’s introduction of sequential and parallel multi-model workflows marks a shift away from the era of monolithic models. Orchestrating distinct models such as GPT and Claude within a single platform has implications for the broader technology landscape. It loosely mirrors Layer 2 scaling solutions in blockchain architectures, where complex operations are handled off-chain or through specialized protocols to improve efficiency and throughput. Assigning tasks to models according to their strengths (generation, critique, or comparative analysis) is a form of task delegation and verification.

This could pave the way for more robust and verifiable AI systems that reduce errors and increase trust, much as smart contracts and decentralized ledgers aim to provide auditable and secure transaction records. As Web3 development matures, integrating this kind of AI orchestration could lead to more intelligent decentralized applications (dApps), autonomous agents, and better data analysis within decentralized networks, fostering a new generation of AI-native Web3 ecosystems.

Original article: decrypt.co