Innovative AI Model Merging Pushes Performance Boundaries

In a notable display of open-source innovation, AI engineer Kyle Hessling has combined two fine-tuned models, one trained to reason like Claude 4.6 Opus and the other like GLM-5.1, into a single, more capable model. This “frankenmerge,” as it’s been dubbed, stacks layers from both models and then applies a “heal fine-tune” to repair the raw merge’s output. The result rivals, and in Hessling’s tests surpasses, a much larger model from Alibaba. The process highlights the growing sophistication and accessibility of advanced AI development within the broader tech ecosystem.

Key Takeaways

- AI engineer Kyle Hessling merged two Qwen 3.5 9B fine-tunes, one trained on Claude 4.6 Opus-style reasoning and one distilled from GLM-5.1.

- The resulting “frankenmerge” model required a “heal fine-tune” to fix the garbled output of the raw merge.

- Despite occasional over-reasoning, the model performs strongly, passing 40 of 44 capability tests and outperforming Alibaba’s larger Qwen 3.6-35B-A3B MoE model.

- This development underscores the power of community-driven AI innovation and modular model development.

Hessling, an AI infrastructure engineer, built an 18-billion-parameter model by stacking layers from two specialized fine-tunes. The first part uses layers from Qwopus 3.5-9B-v3.5, a Qwen fine-tune trained to reproduce the reasoning style of Claude 4.6 Opus. The second part uses layers from Qwen 3.5-9B-GLM5.1-Distill-v1, trained on GLM-5.1’s structured reasoning framework. The goal is to combine Opus-style structured planning with GLM’s problem decomposition in a single, cohesive architecture.
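The layer arrangement described above can be sketched as a simple stacking plan. This is a minimal illustration, not Hessling’s script: the `LayerSlice` and `build_stack` names are hypothetical, and the assumption that each 9B source contributes all of its decoder layers, with 32 layers each, is for illustration only. Embeddings and the output head, which a real merge must also take from one of the sources, are left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LayerSlice:
    """A contiguous run of decoder layers copied from one source model."""
    source: str  # source model name, as given in the article
    start: int   # first layer index, inclusive
    end: int     # last layer index, exclusive

    def __len__(self) -> int:
        return self.end - self.start


def build_stack(plan: list[LayerSlice]) -> list[tuple[str, int]]:
    """Flatten a slice plan into the ordered (source, layer_index) list of the merged model."""
    stack: list[tuple[str, int]] = []
    for s in plan:
        if s.start < 0 or s.end <= s.start:
            raise ValueError(f"invalid layer range for {s.source}: [{s.start}, {s.end})")
        stack.extend((s.source, i) for i in range(s.start, s.end))
    return stack


# Hypothetical: assume each 9B source model has 32 decoder layers and all are kept.
LAYERS_PER_SOURCE = 32
plan = [
    LayerSlice("Qwopus 3.5-9B-v3.5", 0, LAYERS_PER_SOURCE),             # Opus-style planning first
    LayerSlice("Qwen 3.5-9B-GLM5.1-Distill-v1", 0, LAYERS_PER_SOURCE),  # GLM-style decomposition second
]
stack = build_stack(plan)
print(len(stack))  # twice the depth of either source under these assumptions
print(stack[0], stack[-1])
```

Keeping the full depth of both sources is what roughly doubles the parameter count from 9B to about 18B.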

The method, termed a “passthrough frankenmerge,” stacks model layers directly instead of averaging their weights. Hessling wrote a custom script for the merge to accommodate Qwen 3.5’s distinctive attention mechanisms. The resulting model passed 40 of 44 capability tests. Notably, it outperforms Alibaba’s Qwen 3.6-35B-A3B MoE model while requiring substantially less VRAM, so it runs on consumer-grade hardware.
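To make the “passthrough” distinction concrete, the toy sketch below contrasts it with a plain weighted average, the kind of merge a passthrough deliberately avoids. Layers are stand-in lists of floats rather than real tensors, and both function names are hypothetical; the sketch says nothing about how Hessling’s script handles Qwen 3.5’s attention layers.

```python
# Toy illustration: each "layer" is a flat list of weights; real layers are tensors.
Layer = list[float]


def passthrough_merge(a: list[Layer], b: list[Layer]) -> list[Layer]:
    """Stack layers: every layer of a, then every layer of b, with weights left untouched."""
    return [list(layer) for layer in a] + [list(layer) for layer in b]


def linear_merge(a: list[Layer], b: list[Layer], t: float = 0.5) -> list[Layer]:
    """Contrast case: element-wise weighted average of corresponding layers."""
    if len(a) != len(b):
        raise ValueError("linear merging needs matching layer counts")
    return [
        [(1 - t) * wa + t * wb for wa, wb in zip(la, lb)]
        for la, lb in zip(a, b)
    ]


model_a = [[1.0, 2.0], [3.0, 4.0]]
model_b = [[5.0, 6.0], [7.0, 8.0]]

print(passthrough_merge(model_a, model_b))  # [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0]]
print(linear_merge(model_a, model_b))       # [[3.0, 4.0], [5.0, 6.0]]
```

Passthrough keeps every original weight but doubles the depth, so the second block now receives hidden states from layers it was never trained alongside; that mismatch is one plausible reason a raw stack needs healing afterward.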

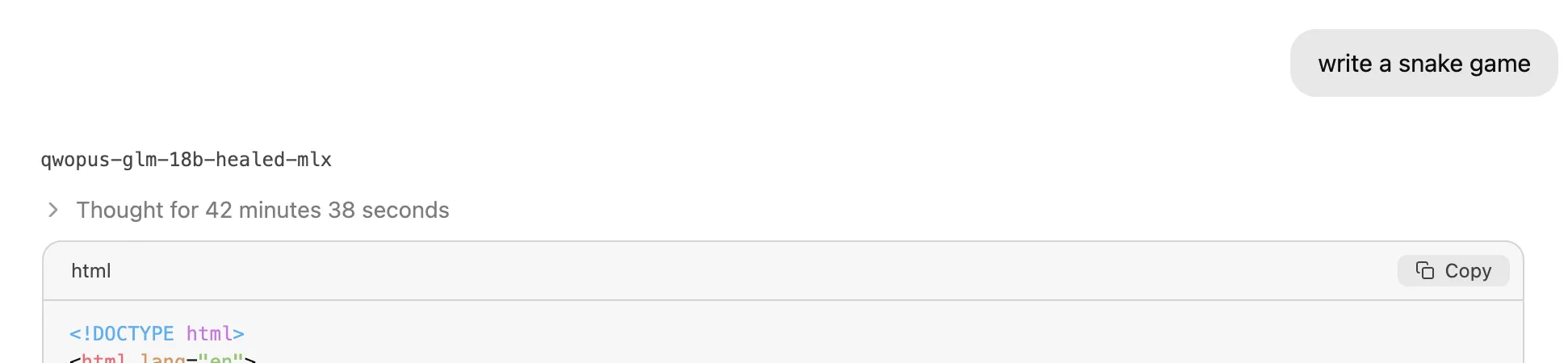

The initial raw merge, however, produced garbled code. Hessling addressed this with a “heal fine-tune” using QLoRA, which trains small low-rank adapter matrices on top of a frozen, 4-bit-quantized copy of the model; here the adapters targeted attention and projection layers to restore output coherence. The model’s extended reasoning can still lead to “over-thinking” on some prompts, producing lengthy outputs that are not always immediately actionable, but the underlying architecture is considered highly promising.
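The heal fine-tune idea can be sketched at the level of a single linear layer. In QLoRA the pretrained weight W stays frozen in 4-bit form and only two small matrices A and B are trained, so the layer computes W·x + (alpha/r)·B·A·x. The sketch below is a simplification built on assumptions: it swaps QLoRA’s NF4 format for plain absmax 4-bit rounding, shows only the forward pass, and the `LoRALinear` class, rank, and alpha values are hypothetical rather than Hessling’s settings.

```python
import random


def quantize_4bit(w: list[list[float]]) -> tuple[list[list[int]], float]:
    """Absmax 4-bit quantization (a simplified stand-in for QLoRA's NF4 format)."""
    scale = max(abs(x) for row in w for x in row) / 7 or 1.0  # symmetric int range -7..7
    q = [[max(-7, min(7, round(x / scale))) for x in row] for row in w]
    return q, scale


def dequantize(q: list[list[int]], scale: float) -> list[list[float]]:
    return [[v * scale for v in row] for row in q]


def matvec(m: list[list[float]], x: list[float]) -> list[float]:
    return [sum(mij * xj for mij, xj in zip(row, x)) for row in m]


class LoRALinear:
    """Frozen quantized base weight W plus a trainable low-rank update (alpha / r) * B @ A."""

    def __init__(self, w: list[list[float]], rank: int = 2, alpha: float = 4.0, seed: int = 0):
        self.q, self.scale = quantize_4bit(w)  # base weights: frozen, stored as 4-bit ints
        d_out, d_in = len(w), len(w[0])
        rng = random.Random(seed)
        # A (rank x d_in) gets small random values; B (d_out x rank) starts at zero,
        # so the adapter contributes nothing until training moves B.
        self.a = [[rng.gauss(0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.b = [[0.0] * rank for _ in range(d_out)]
        self.scaling = alpha / rank

    def forward(self, x: list[float]) -> list[float]:
        base = matvec(dequantize(self.q, self.scale), x)  # frozen path
        update = matvec(self.b, matvec(self.a, x))        # trainable low-rank path
        return [y + self.scaling * u for y, u in zip(base, update)]


layer = LoRALinear([[0.5, -1.0], [0.25, 0.75]], rank=2, alpha=4.0)
x = [1.0, 2.0]
print(layer.forward(x))  # equals the dequantized base output, since B is still zero
```

Because B starts at zero, the adapted layer initially reproduces the frozen base layer; training then updates only A and B, which is why this kind of fine-tune fits on modest hardware.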

The model was tested on an M1 MacBook using an MLX-quantized version, which produced extensive reasoning chains. Despite these usability hurdles for immediate deployment, the core achievement lies in the modularity and efficiency demonstrated. That an open-source community member could build a model rivaling much larger releases, and fit it on accessible hardware, signals a powerful shift in AI development paradigms.
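The VRAM comparison can be checked with back-of-the-envelope arithmetic: weight memory is roughly parameter count times bits per weight divided by 8. The sketch assumes the “35B-A3B” name follows Qwen’s convention of about 35B total parameters with roughly 3B active per token; a mixture-of-experts model still has to keep all experts in memory, so its footprint tracks the total. Figures cover weights only and ignore the KV cache, activations, and the per-group scales that quantized formats store, so real usage is somewhat higher.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in decimal GB: (params * 1e9) * bits / 8 bytes, divided by 1e9."""
    return params_billion * bits_per_weight / 8


models = {
    "18B dense frankenmerge": 18,
    "Qwen 3.6-35B-A3B (all experts resident)": 35,
}
for name, params in models.items():
    for bits in (16, 8, 4):
        print(f"{name:42s} {bits:2d}-bit: {weight_memory_gb(params, bits):5.1f} GB")
```

At 4 bits the merged model needs about 9 GB for weights versus about 17.5 GB for the MoE, consistent with the point that the smaller dense stack fits more comfortably on consumer machines.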

Long-Term Technological Impact: The Rise of Modular AI Architectures

The “frankenmerge” approach Hessling used points toward a potential shift in AI development toward modular, composable architectures. This trend has implications for blockchain innovation, AI integration, and Web3 development. By merging and fine-tuning specialized models efficiently, developers can create AI agents that are both capable and resource-efficient. This modularity is akin to building complex smart contracts on a blockchain: each component is specialized, and components can be combined into sophisticated applications. For Web3, this could translate into decentralized AI services that are more accessible and customizable, reducing reliance on monolithic, centralized AI providers. The ability to stack and refine models, much like optimizing Layer 2 solutions for scalability, offers a path toward democratizing advanced AI capabilities and fostering a more vibrant, decentralized AI ecosystem.

The open-source community’s rapid iteration and problem-solving are crucial here. A specialized fine-tune was created and shared, then combined by another community member into a model that outperforms a larger release from an established AI lab, which highlights the power of collaborative development. This dynamic mirrors the evolution of blockchain technology, where open protocols and community contributions drive innovation. The ongoing development and refinement of models like Hessling’s point toward increasingly sophisticated and specialized AI tools that can be integrated across decentralized applications and platforms, accelerating the maturation of Web3 technologies.

Jackrong’s subsequent mirroring of Hessling’s repository and the model’s significant download count within weeks of its release underscore the community’s enthusiasm and the practical value of such developments. This “bottom-up” approach to AI advancement, characterized by the stacking of specialized components and community-driven refinement, is poised to significantly influence the future trajectory of artificial intelligence and its integration into decentralized technologies.

Based on materials from: decrypt.co