DeepSeek Unveils Groundbreaking V4 Models, Challenging Western AI Dominance with Unprecedented Efficiency and Scale

In a move that has significant implications for the global AI landscape, Chinese AI lab DeepSeek has released its latest generation of Large Language Models (LLMs), the DeepSeek-V4 series. This launch, occurring just hours after OpenAI’s announcement of GPT-5.5, signals a new era of intense competition, particularly focusing on cost-efficiency, model scale, and advanced architectural innovations. The DeepSeek-V4 models, including the powerful V4-Pro and the agile V4-Flash, are positioned to disrupt the market with their massive parameter counts, extensive context windows, and remarkably low pricing.

Key Takeaways

- DeepSeek has launched DeepSeek-V4-Pro, featuring 1.6 trillion parameters, and DeepSeek-V4-Flash, with 284 billion parameters.

- Both models support a one-million-token context window, roughly 750,000 words in a single query.

- Pricing for V4-Pro is set at $1.74 per million input tokens and $3.48 per million output tokens, a fraction of competitors’ costs.

- DeepSeek utilized Huawei Ascend chips for training, navigating U.S. export restrictions and aiming for further cost reductions.

- Innovative attention mechanisms, Compressed Sparse Attention and Heavily Compressed Attention, address the scaling challenges of long contexts.

DeepSeek’s strategic timing and technological advancements underscore a deliberate effort to challenge established Western AI players. The lab’s previous releases have already demonstrated an ability to deliver state-of-the-art performance at a fraction of the cost, prompting scrutiny of the substantial investments made by U.S. companies. The V4 series represents a more refined, technical approach, emphasizing practical application and efficiency for developers building AI-powered solutions.

The DeepSeek-V4-Pro model has 1.6 trillion total parameters, positioning it as the largest open-weight model currently available. A Mixture-of-Experts (MoE) architecture keeps that scale manageable: only 49 billion parameters, about 3% of the total, are activated per inference pass. The model can therefore store far more knowledge than a dense model of the same inference cost, without a proportional increase in compute. DeepSeek claims V4-Pro significantly advances open-source model capabilities, rivaling top closed-source models in coding and agentic tasks.

Complementing V4-Pro is V4-Flash, designed for speed and cost-effectiveness. With 284 billion total parameters and 13 billion active parameters, it offers reasoning performance comparable to the Pro version, especially when given more processing time. Both models feature a one-million-token context window as standard, a capability that typically comes at a premium or is limited in competing models. A window of that size holds roughly 750,000 words, enabling deeper analysis of large documents, codebases, or entire books within a single query.
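To put those figures side by side, the short sketch below computes what share of each model's parameters is active per pass and converts the context window into words. The parameter counts come from the article; the 0.75 words-per-token ratio is only the one implied by the "roughly 750,000 words" figure, and the function names are illustrative.

```python
# Figures quoted in the article; helper names are illustrative only.
MODELS = {
    "V4-Pro": {"total_params": 1.6e12, "active_params": 49e9},
    "V4-Flash": {"total_params": 284e9, "active_params": 13e9},
}

CONTEXT_TOKENS = 1_000_000
# Assumption: ratio implied by the article's "roughly 750,000 words".
WORDS_PER_TOKEN = 0.75


def active_fraction(total_params: float, active_params: float) -> float:
    """Share of a MoE model's parameters used in one inference pass."""
    return active_params / total_params


def approx_words(tokens: int, words_per_token: float = WORDS_PER_TOKEN) -> int:
    """Rough word count that fits in a context window of `tokens` tokens."""
    return round(tokens * words_per_token)


if __name__ == "__main__":
    for name, spec in MODELS.items():
        share = active_fraction(spec["total_params"], spec["active_params"])
        print(f"{name}: {share:.1%} of parameters active per pass")
    print(f"{CONTEXT_TOKENS:,} tokens ~ {approx_words(CONTEXT_TOKENS):,} words")
```

By this arithmetic, V4-Pro activates about 3% of its parameters per pass and V4-Flash a little under 5%, which is why per-token compute tracks the active count rather than the headline total.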

Long-Term Technological Impact: Redefining AI Accessibility and Architecture

The release of DeepSeek’s V4 models, particularly their approach to attention mechanisms and cost-efficient scaling, has long-term implications for the AI industry. Traditional attention mechanisms in LLMs have quadratic complexity with respect to context length, which makes extremely long contexts computationally prohibitive. DeepSeek’s Compressed Sparse Attention and Heavily Compressed Attention tackle this problem directly. By compressing groups of tokens and selecting only the relevant ones, these mechanisms sharply reduce the compute and memory needed to process very long inputs. That matters for applications that depend on deep understanding of extensive texts, such as complex legal document analysis, comprehensive code review, and scientific research.

The ability to handle a million tokens efficiently also democratizes access to long-context processing, potentially lowering the barrier to entry for sophisticated AI applications. Furthermore, the open-weight nature of these models, coupled with their aggressive pricing, fuels the growth of Web3 development and decentralized AI ecosystems, enabling broader adoption and customization beyond centralized cloud services.

The focus on models that are economical and adaptable as well as powerful suggests a strategic shift towards AI agents and complex workflows rather than simple query-response systems. This could accelerate the development of autonomous systems and AI-driven tools across various industries.
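The scaling argument can be made concrete with a toy cost model. The sketch below counts query-key score computations for full causal attention and for a generic "compress into blocks, then attend only to the top-k blocks" scheme. It is not DeepSeek's implementation: the article does not describe the internals of Compressed Sparse Attention, and `block_size` and `top_k` are hypothetical values chosen only for illustration.

```python
def full_attention_scores(n_tokens: int) -> int:
    """Query-key scores for full causal attention.

    Token i attends to itself and every earlier token, so the total is
    1 + 2 + ... + n, which grows quadratically with context length.
    """
    return n_tokens * (n_tokens + 1) // 2


def compressed_sparse_scores(n_tokens: int, block_size: int, top_k: int) -> int:
    """Toy cost model for block-compressed, top-k sparse attention.

    Assumption (not from DeepSeek's report): each query first scores one
    compressed summary per block, then attends token by token inside only
    the top_k selected blocks. Causal masking is ignored, so this is an
    upper bound for the scheme being modelled.
    """
    n_blocks = -(-n_tokens // block_size)  # ceiling division
    per_query = n_blocks + min(top_k, n_blocks) * block_size
    return n_tokens * per_query


if __name__ == "__main__":
    n = 1_000_000
    full = full_attention_scores(n)
    sparse = compressed_sparse_scores(n, block_size=64, top_k=16)
    print(f"full attention:     {full:,} scores")
    print(f"compressed sparse:  {sparse:,} scores")
    print(f"reduction factor:   {full / sparse:.1f}x")
```

Even this simple scheme still has a term that grows with the square of the context length, but divided by the block size; heavier compression shrinks that term further at the cost of coarser selection.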

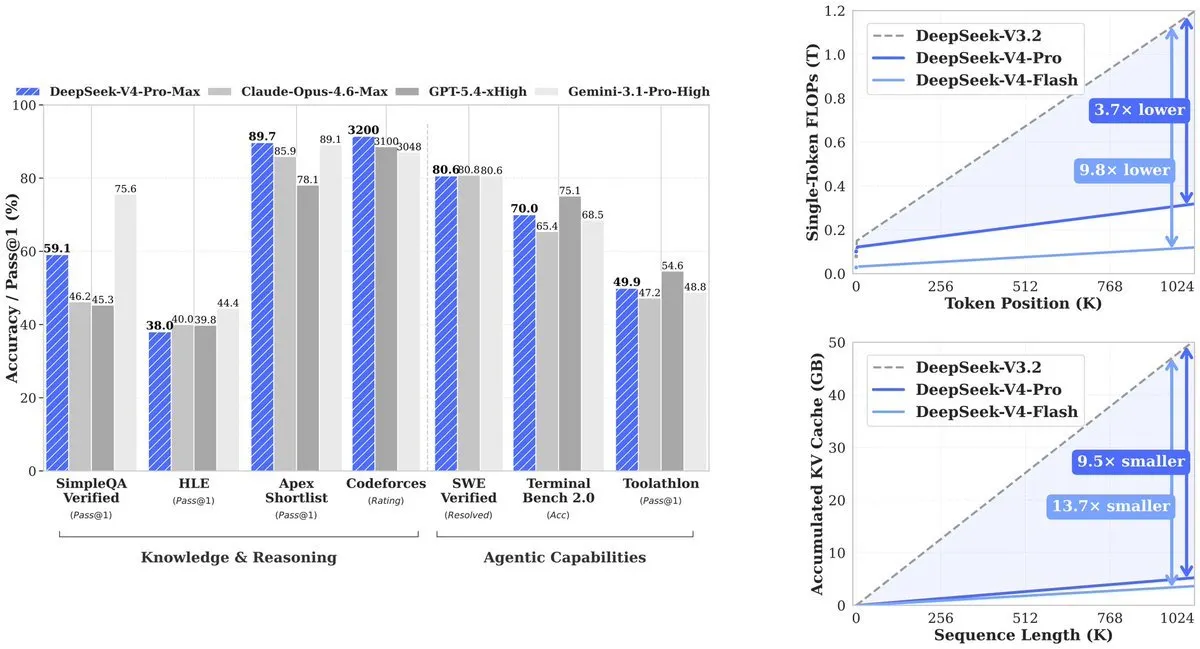

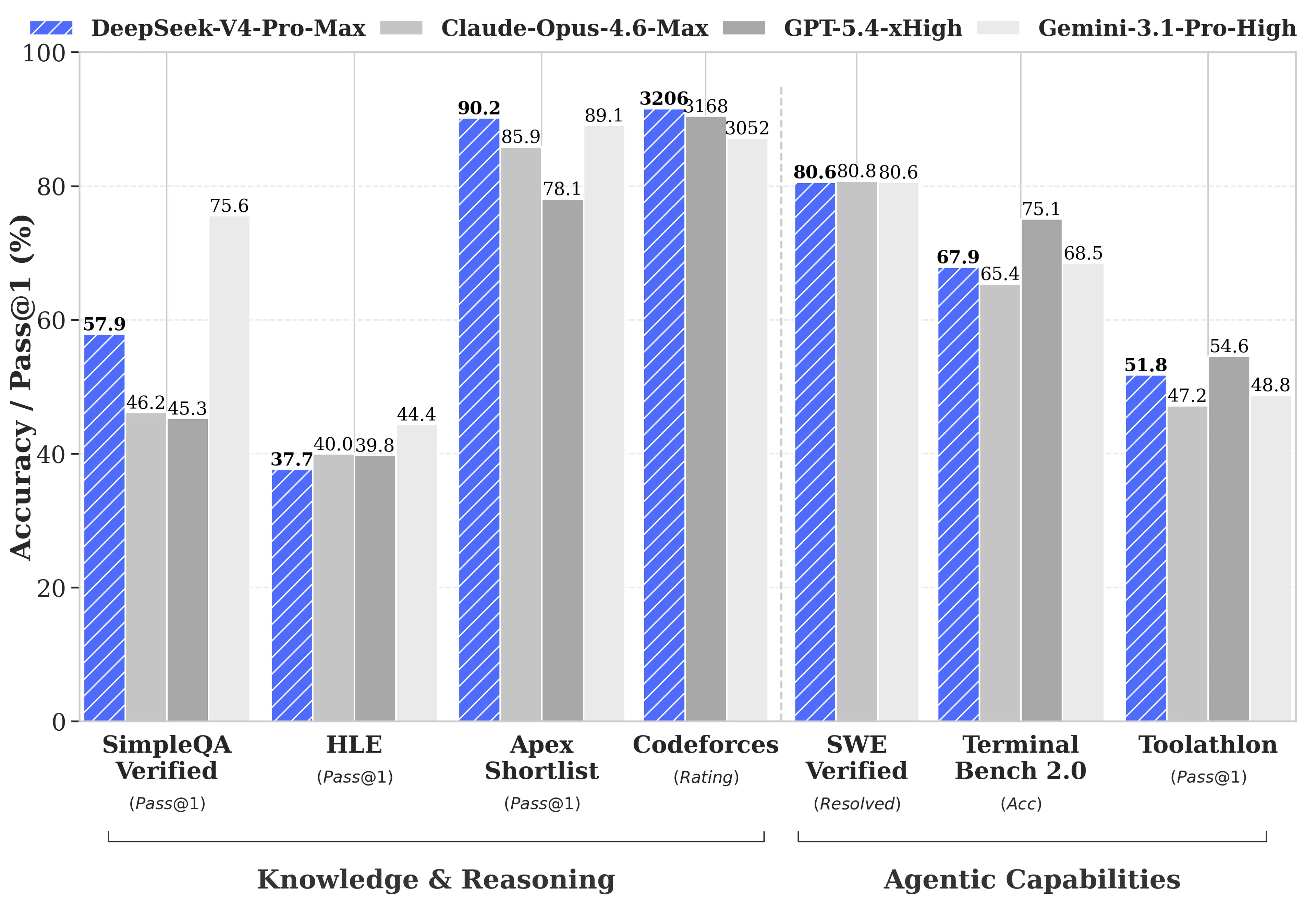

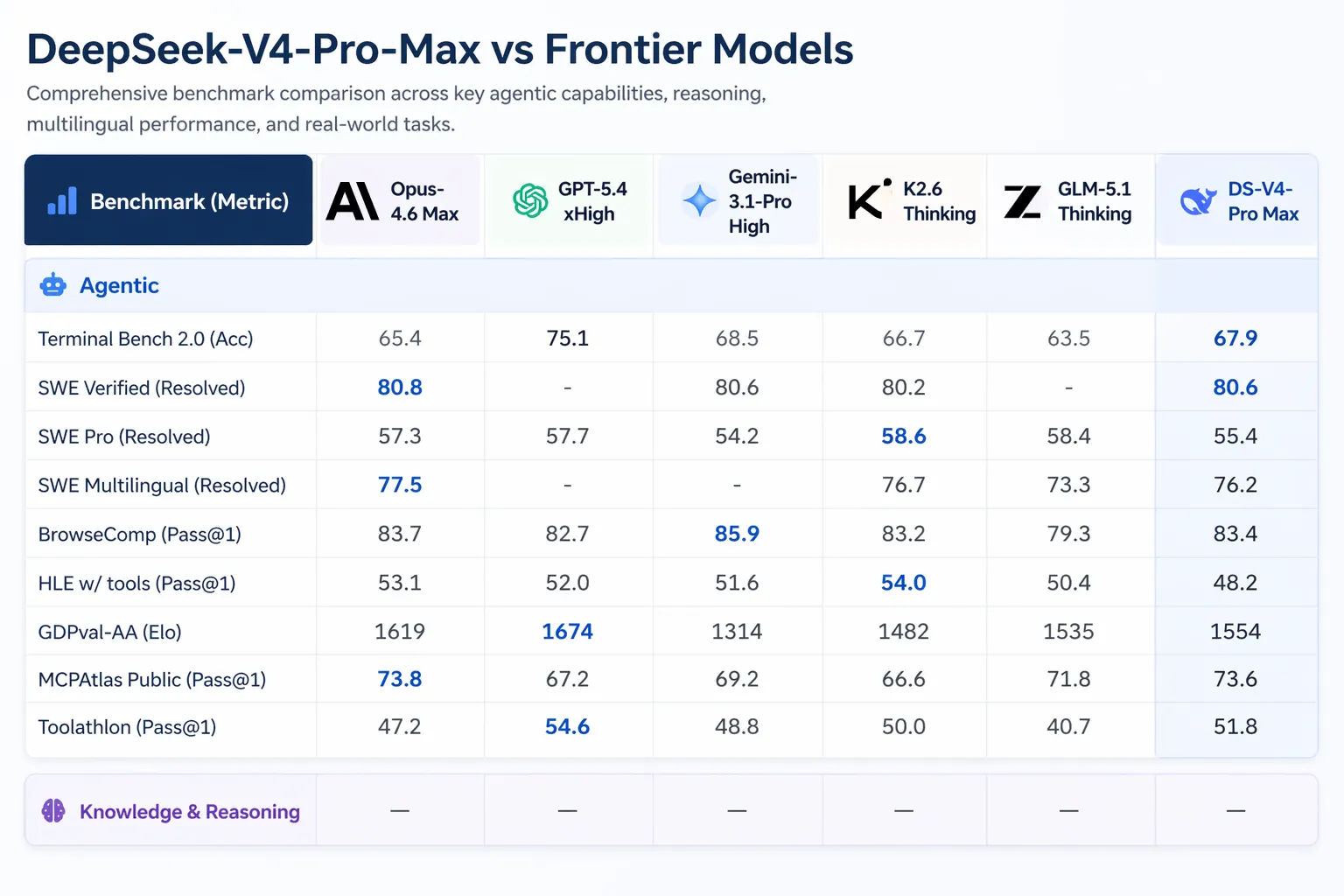

DeepSeek’s technical report highlights the efficiency gains: for a million-token context, V4-Pro uses approximately 27% of the compute and 10% of the KV cache memory that its predecessor required. V4-Flash achieves even greater efficiency. This translates into much lower operational costs. V4-Pro is priced at $1.74 per million input tokens and $3.48 per million output tokens, about 35% of GPT-5.5’s reported input price and 12% of its output price ($5/$30), and far below its Pro version’s $30/$180. V4-Flash is even more accessible at $0.14 input and $0.28 output per million tokens. This price-performance ratio is set to redefine the economic viability of large-scale AI deployments.
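As a rough illustration of what those list prices mean per request, the sketch below costs out a single long-context call. The prices are the ones quoted in the article (the GPT-5.5 figures are described there as reported); the workload of 800,000 input tokens and 4,000 output tokens is a hypothetical example, and real bills may differ because of caching discounts or other pricing tiers not covered here.

```python
# USD per million tokens as (input, output), taken from the article.
PRICES_USD_PER_MILLION = {
    "DeepSeek-V4-Flash": (0.14, 0.28),
    "DeepSeek-V4-Pro": (1.74, 3.48),
    "GPT-5.5": (5.00, 30.00),
    "GPT-5.5 Pro": (30.00, 180.00),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request at the listed per-million-token prices."""
    in_price, out_price = PRICES_USD_PER_MILLION[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: a near-full context plus a short answer.
    input_tokens, output_tokens = 800_000, 4_000
    for model in PRICES_USD_PER_MILLION:
        cost = request_cost(model, input_tokens, output_tokens)
        print(f"{model:<18} ${cost:,.2f} per request")
```

Because long-context requests are dominated by input tokens, the input price matters most for this kind of workload; output-heavy workloads such as code generation shift the comparison towards the output price.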

DeepSeek-V4-Pro shows competitive performance, especially in coding and on specialized benchmarks such as Apex Shortlist and SWE-Verified, but DeepSeek acknowledges that it lags slightly behind top-tier models like Gemini-3.1-Pro and Claude Opus 4.6 on broader reasoning and knowledge benchmarks. Its strengths in extended contexts and agentic tasks are nonetheless noteworthy. The introduction of “interleaved thinking” is meant to keep context and reasoning coherent across workflows that involve multiple tool calls, which is critical for AI agents and automated pipelines.

> deepseek v4 is now the cheapest sota model available at 1/20th the cost of opus 4.7.
>
> for perspective, if uber used deepseek instead of claude their 2026 ai budget would have lasted 7 years instead of only 4 months. pic.twitter.com/i9rJZzvRBV
>
> Saoud Rizwan (@sdrzn), April 24, 2026

The geopolitical context of DeepSeek’s development cannot be overlooked. U.S. restrictions on high-end chip exports have not halted progress but appear to have catalyzed innovation in architectural efficiency and domestic hardware utilization. This has positioned Chinese AI labs to offer compelling alternatives that bypass these restrictions, potentially reshaping global supply chains and technological dependencies in AI.

For developers, the economic proposition is clear: V4-Pro offers enterprise-grade capabilities at a fraction of competitors’ prices. The extensive context window opens up new possibilities for processing large datasets and complex documents, making advanced AI more accessible for tasks like legal review, financial analysis, and extensive code generation. For individual developers and smaller teams, V4-Flash provides a highly capable and very affordable option. Its open-source, MIT-licensed nature further supports customization and integration within broader blockchain and Web3 projects. As DeepSeek continues to develop multimodal capabilities, its influence is poised to grow, intensifying the global race for AI supremacy and innovation.

Source: decrypt.co