A recent evaluation by the Center for AI Standards and Innovation (CAISI), a unit of the U.S. National Institute of Standards and Technology (NIST), has placed China’s DeepSeek V4 Pro AI model “eight months behind the frontier,” sparking debate about the assessment’s accuracy and methodology. CAISI’s report, released on May 1, names DeepSeek V4 Pro the most advanced Chinese AI model it has evaluated to date, but its scoring method and cost-comparison filter have drawn criticism for potentially skewing the results.

Key Takeaways

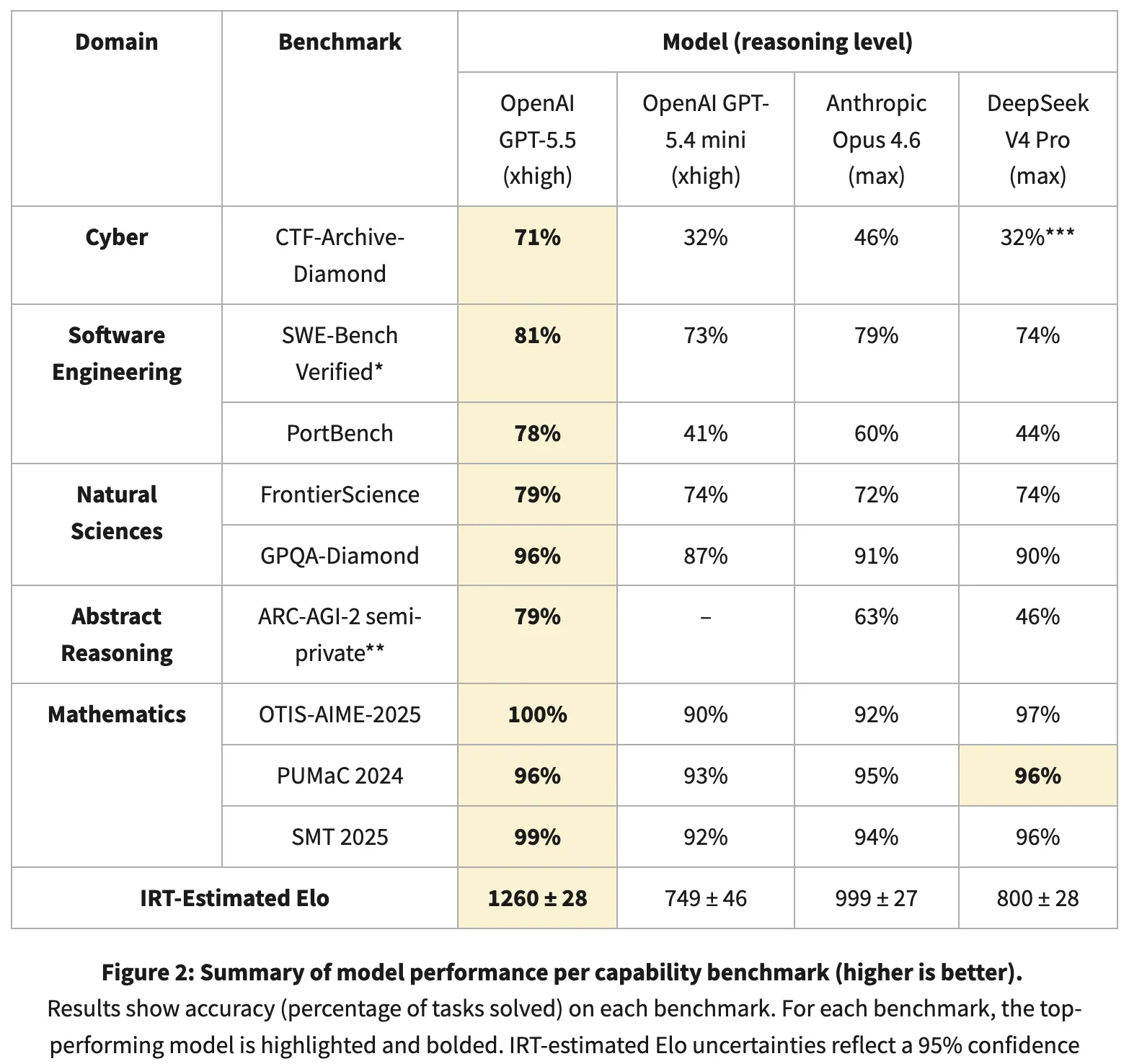

- CAISI’s evaluation suggests DeepSeek V4 Pro trails the AI frontier by about eight months, based on an Item Response Theory (IRT) scoring system applied to nine benchmarks, two of which are private datasets that cannot be independently verified.

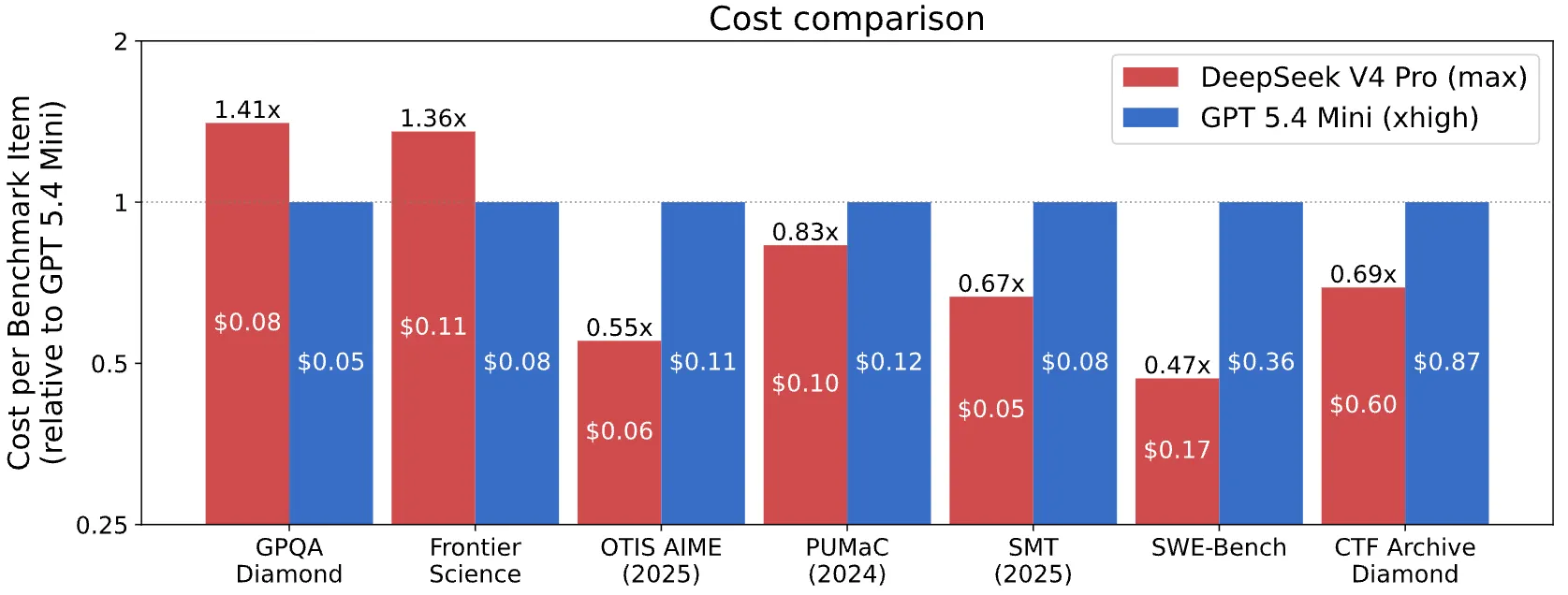

- A cost-comparison filter employed by CAISI excluded most U.S. models, leaving only GPT-5.4 mini for comparison, against which DeepSeek was reportedly cheaper on five out of seven benchmarks.

- Independent analyses, such as Stanford’s 2026 AI Index, indicate a significantly smaller performance gap between U.S. and Chinese AI models on public leaderboards, estimating it at just 2.7%.

Rather than simply averaging benchmark results, the CAISI evaluation uses Item Response Theory (IRT), a statistical approach common in standardized testing. IRT estimates a model’s underlying capability from which problems it solves and which it fails, here across nine benchmarks spanning cybersecurity, software engineering, natural sciences, abstract reasoning, and mathematics. On the resulting IRT-based Elo scale, GPT-5.5 scores 1,260 points and Anthropic’s Claude Opus 4.6 scores 999. DeepSeek V4 Pro scores around 800 (±28), close to GPT-5.4 mini’s 749 but well behind higher-tier models such as Opus.
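CAISI has not yet published the details of its IRT model, so the following is only a minimal sketch of the general technique, assuming a two-parameter logistic (2PL) model whose item parameters (discrimination `a`, difficulty `b`) have already been calibrated. It estimates one model’s ability by maximum likelihood and then maps it onto an Elo-style scale using the standard logistic relationship behind Elo ratings. The function names, the item parameters, and the anchor of 1,000 points are hypothetical, not CAISI’s. The returned standard error shows one common way an uncertainty band like DeepSeek’s ±28 can arise, although CAISI may compute its intervals differently.

```python
import math


def p_correct(theta: float, a: float, b: float) -> float:
    """2PL IRT: probability that a model with ability theta solves an item
    with discrimination a and difficulty b (all on the logit scale)."""
    return 1.0 / (1.0 + math.exp(-a * (theta - b)))


def estimate_ability(responses: list[int], items: list[tuple[float, float]],
                     iters: int = 100) -> tuple[float, float]:
    """Maximum-likelihood ability estimate with item parameters held fixed.

    responses: 0/1 outcomes, 1 = item solved
    items: (a, b) pairs, one per response (hypothetical calibrated values)
    Returns (theta, standard_error) on the logit scale.
    """
    if all(r == 1 for r in responses) or all(r == 0 for r in responses):
        # Perfect or zero scores have no finite maximum-likelihood estimate.
        raise ValueError("responses must contain both solved and failed items")
    theta = 0.0
    for _ in range(iters):
        probs = [p_correct(theta, a, b) for a, b in items]
        # Score function: first derivative of the log-likelihood in theta.
        grad = sum(a * (r - p) for r, p, (a, _) in zip(responses, probs, items))
        # Fisher information: negative second derivative of the log-likelihood.
        info = sum(a * a * p * (1 - p) for p, (a, _) in zip(probs, items))
        # Damped Newton step so early iterations cannot overshoot wildly.
        theta += max(-1.0, min(1.0, grad / info))
    probs = [p_correct(theta, a, b) for a, b in items]
    info = sum(a * a * p * (1 - p) for p, (a, _) in zip(probs, items))
    return theta, 1.0 / math.sqrt(info)


def to_elo(theta: float, anchor: float = 1000.0) -> float:
    """Map a logit-scale ability onto an Elo-style scale.

    Elo's expected score 1 / (1 + 10**(-d / 400)) is a logistic curve in
    d * ln(10) / 400, so one logit equals 400 / ln(10) (about 174) points.
    The anchor is an arbitrary, hypothetical choice.
    """
    return anchor + theta * 400.0 / math.log(10)


if __name__ == "__main__":
    # Hypothetical calibrated items: (discrimination, difficulty).
    items = [(1.0, -2.0), (1.0, -1.0), (1.5, 0.0), (2.0, 1.0), (2.0, 2.0)]
    for label, resp in [("model_x", [1, 1, 1, 1, 0]), ("model_y", [1, 1, 0, 0, 0])]:
        theta, se = estimate_ability(resp, items)
        print(f"{label}: {to_elo(theta):.0f} ± {se * 400 / math.log(10):.0f}")
```

Because each item carries its own discrimination and difficulty, two models with the same raw number of correct answers can receive different ability estimates depending on which items they solved, which is the key difference from plain averaging.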

A major point of contention is CAISI’s use of two private datasets that cannot be publicly verified. The largest performance gaps appear on these undisclosed benchmarks. For instance, on one cybersecurity test, CTF-Archive-Diamond, GPT-5.5 reportedly scored 71%, while DeepSeek scored only about 32%.

By contrast, results on public benchmarks paint a different picture. On GPQA-Diamond, a test of PhD-level science reasoning, DeepSeek scored 90%, one point below Claude Opus 4.6’s 91%. DeepSeek also scored 97%, 96%, and 96% on mathematical olympiad benchmarks and 74% on SWE-Bench Verified, which measures success at fixing GitHub issues, compared with GPT-5.5’s 81% on SWE-Bench Verified. These results align more closely with DeepSeek’s own technical reports, which claim V4 Pro performs comparably to Opus 4.6 and GPT-5.4.

The cost-comparison methodology has also raised eyebrows. CAISI applied a filter that excluded U.S. models deemed either too expensive or underperforming relative to DeepSeek, leaving GPT-5.4 mini as the only comparable U.S. model. Even in this narrow comparison, DeepSeek reportedly offered a lower cost per token on five of the seven evaluated benchmarks.
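The report’s exact filter thresholds are not given here, so the following is only a hypothetical sketch of how a two-criterion filter of this kind could work. It drops candidates whose capability falls more than a chosen margin below the reference model, or whose mean cost exceeds a chosen multiple of the reference’s, and then counts the benchmarks on which the reference is cheaper. The `Model` class, `comparison_set`, `cheaper_on`, both thresholds, and every number are invented for illustration; none of them come from CAISI.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Model:
    name: str
    capability: float        # hypothetical aggregate score (e.g. an Elo-style number)
    cost: dict[str, float]   # hypothetical cost per benchmark, keyed by benchmark name


def comparison_set(reference: Model, candidates: list[Model],
                   max_cost_ratio: float, capability_margin: float = 0.0) -> list[Model]:
    """Keep candidates that neither underperform nor cost too much.

    A candidate 'underperforms' if its capability is more than capability_margin
    below the reference; it is 'too expensive' if its mean cost exceeds
    max_cost_ratio times the reference's mean cost. Both rules are assumptions.
    """
    ref_cost = mean(reference.cost.values())
    kept = []
    for m in candidates:
        underperforms = m.capability < reference.capability - capability_margin
        too_expensive = mean(m.cost.values()) > max_cost_ratio * ref_cost
        if not (underperforms or too_expensive):
            kept.append(m)
    return kept


def cheaper_on(reference: Model, other: Model) -> int:
    """Count shared benchmarks on which the reference model is strictly cheaper."""
    shared = reference.cost.keys() & other.cost.keys()
    return sum(1 for b in shared if reference.cost[b] < other.cost[b])


if __name__ == "__main__":
    # Placeholder models and costs; none of these numbers come from the report.
    ref = Model("reference", 800.0, {"bench_1": 1.0, "bench_2": 2.0, "bench_3": 0.5})
    pool = [
        Model("candidate_a", 760.0, {"bench_1": 1.5, "bench_2": 1.0, "bench_3": 0.8}),
        Model("candidate_b", 1200.0, {"bench_1": 9.0, "bench_2": 12.0, "bench_3": 6.0}),
        Model("candidate_c", 600.0, {"bench_1": 0.4, "bench_2": 0.6, "bench_3": 0.3}),
    ]
    for m in comparison_set(ref, pool, max_cost_ratio=2.0, capability_margin=60.0):
        print(m.name, "reference cheaper on", cheaper_on(ref, m), "of", len(ref.cost))
```

Because the surviving set depends entirely on these two thresholds, modest changes to either can leave a very different pool of models to compare against.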

Long-Term Technological Impact: Shifting Dynamics in AI Development

The divergence in evaluation methodologies highlights a critical juncture in the global AI race. Proprietary benchmarks and selective filtering criteria can shape perceptions of technological leadership, but the growing parity on public leaderboards points to a more fluid landscape. The prominence of open-weight models such as DeepSeek V4 Pro reflects a broader trend toward wider access to advanced AI capabilities. As development continues across regions, reproducible and transparent benchmarking will be essential for accurately measuring progress. This competition, fueled by both closed and open-source innovation, could also accelerate work in adjacent areas such as integrating AI with blockchain systems, building more efficient Layer 2 solutions for AI computation, and expanding Web3 functionality powered by AI agents.

Critics argue that the CAISI report’s reliance on non-public datasets and highly selective cost filters may obscure the true state of AI development. X user “Ex0byt” challenged the report’s findings directly: “There’s no ‘gap’, and no one’s 8 months behind. We’ve been trolled on every closed U.S drop and flexed on with open weights.”

— Eric (@Ex0byt) May 2, 2026

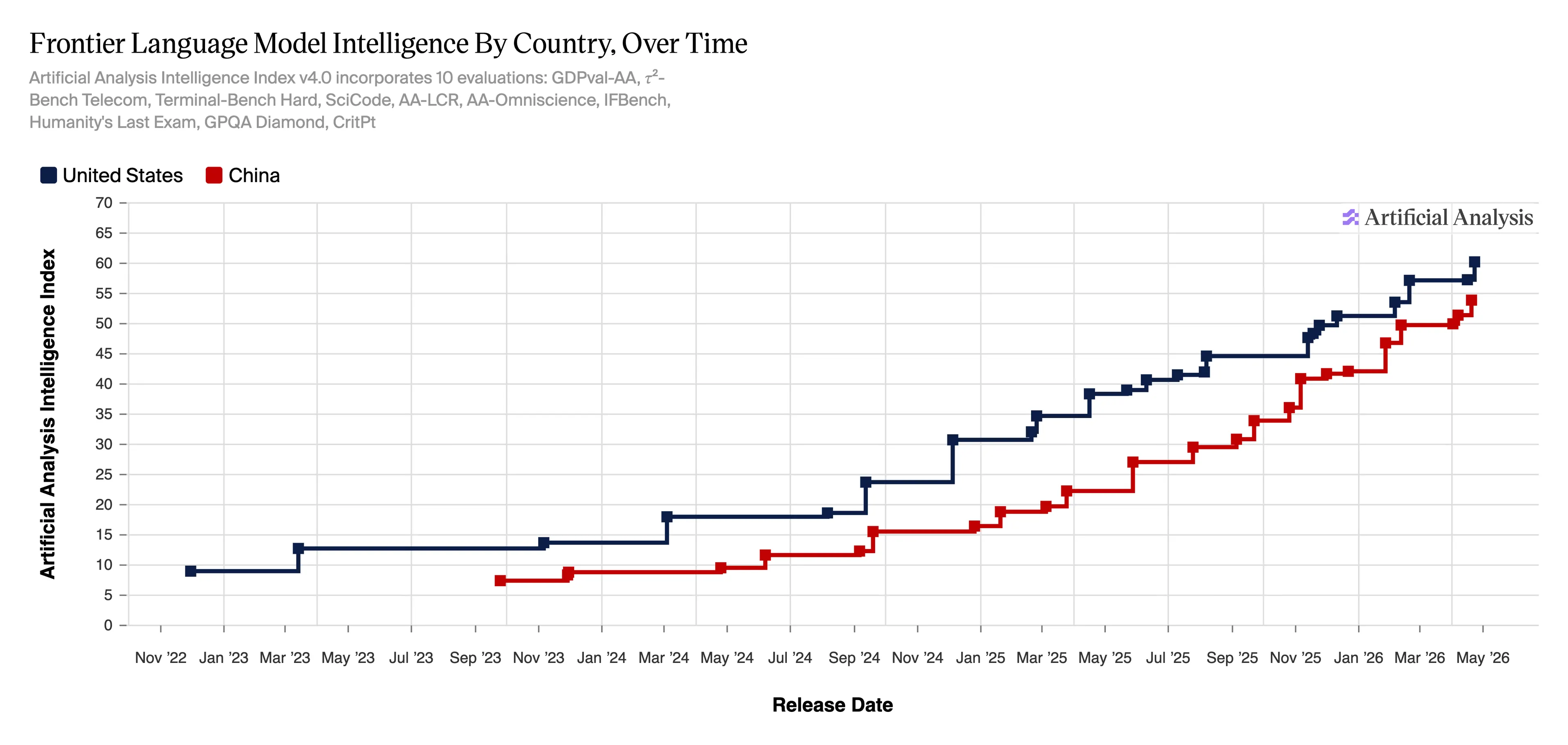

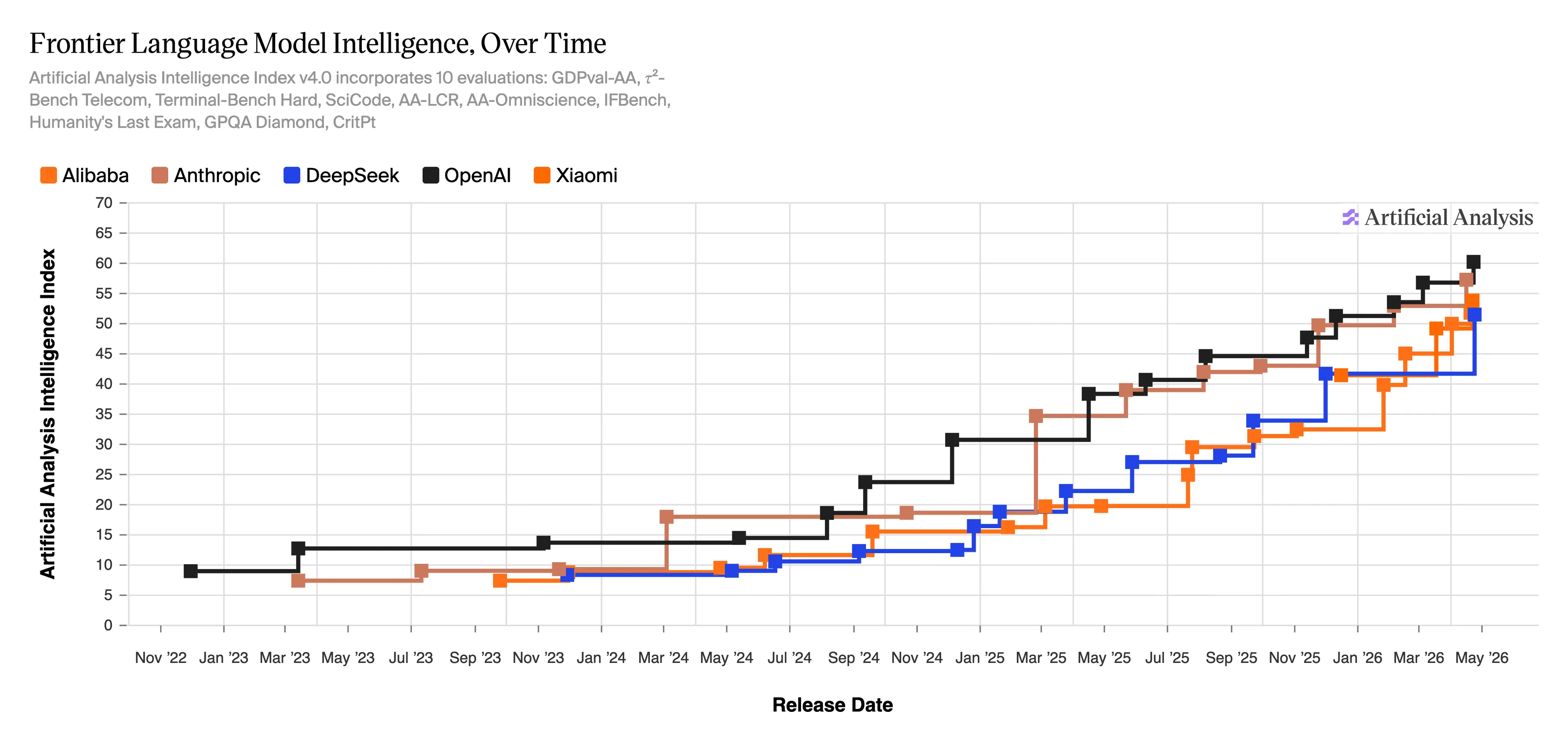

Supporting this view, the Artificial Analysis Intelligence Index v4.0, which tracks frontier model intelligence across 10 evaluations, shows OpenAI models near 60 points and DeepSeek in the low 50s as of May 2026. That gap is narrower than a year earlier, suggesting that competition is intensifying.

Furthermore, Stanford’s 2026 AI Index, released in April, reported that the gap on public leaderboards between leading U.S. models and emerging Chinese models has narrowed significantly, to approximately 2.7%. This trend suggests that AI progress is becoming more globally distributed, challenging narratives of a single dominant leader. CAISI has said it plans to publish a more detailed explanation of its IRT methodology soon, which may clarify its assessment framework.

Source: decrypt.co