Developer Jackrong has released Gemopus, a family of fine-tuned AI models intended to bring strong reasoning performance to consumer-grade hardware. Built on Google’s open-source Gemma 4, the models are pitched as an “all-American” alternative to existing options and aim to approach the reasoning quality of advanced systems like Claude Opus.

Key Takeaways

- Gemopus, a new family of AI models, is based on Google’s open-source Gemma 4.

- It aims to provide advanced reasoning capabilities on local hardware, including personal computers and mobile devices.

- Two main variants are available: Gemopus-4-26B-A4B (a Mixture of Experts model) and Gemopus-4-E4B (an edge-optimized model).

- The models are designed for efficiency, allowing them to run on everyday hardware without requiring dedicated GPUs.

- Developer Jackrong focused on improving answer quality and naturalness rather than strictly mimicking Claude Opus’s chain-of-thought process.

Gemopus addresses a demand for powerful AI that can run locally, a key aspect of decentralized technology and Web3 development. Jackrong’s previous project, Qwopus, pursued similar goals but was built on Qwen, a Chinese model. User feedback asked for a base model with a different geopolitical origin, which led to Gemopus and its Gemma 4 foundation.

The Gemopus family features two distinct models. Gemopus-4-26B-A4B uses a Mixture of Experts (MoE) architecture. Parameters are the learned weights of a model and determine its capacity for learning, reasoning, and information retention. This model has 26 billion parameters in total, but only about 4 billion are activated during inference. The design lets it draw on the knowledge stored in the full parameter set while keeping the compute per token close to that of a much smaller model, which makes it suitable for hardware with limited resources.
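The routing idea behind an MoE layer can be illustrated with a toy sketch. The expert count, the top-k value, the router scores, and the function names below are all illustrative assumptions, not the actual Gemopus or Gemma 4 configuration; the point is only that a router picks a few experts per token and the rest are never evaluated.

```python
import math

def softmax(scores):
    """Convert raw router scores into probabilities."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_token(router_scores, top_k):
    """Return (expert_index, weight) pairs for the top_k highest-scoring experts."""
    probs = softmax(router_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    # Renormalize so the selected experts' weights sum to 1.
    return [(i, probs[i] / norm) for i in chosen]

def moe_layer(x, experts, router_scores, top_k=2):
    """Weighted sum of the selected experts' outputs; unselected experts never run."""
    return sum(w * experts[i](x) for i, w in route_token(router_scores, top_k))

# Hypothetical setup: 8 toy experts, each of which just scales its input.
experts = [lambda x, s=s: s * x for s in range(1, 9)]
scores = [0.1, 2.0, 0.3, 1.5, 0.0, 0.2, 0.1, 0.4]  # made-up router output for one token

selected = route_token(scores, top_k=2)
output = moe_layer(3.0, experts, scores, top_k=2)
print(selected, output)

# Active share implied by the figures quoted in the article (4B of 26B).
active_fraction = 4e9 / 26e9
print(f"{active_fraction:.1%} of parameters active per token")
```

With the article's figures, roughly 15% of the parameters take part in any single forward step, which is where the efficiency claim comes from.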

The second variant, Gemopus-4-E4B, is a 4-billion-parameter model engineered specifically for edge devices. It is designed to run on modern smartphones like the iPhone 17 Pro Max or on lightweight laptops such as MacBooks, without the need for a dedicated GPU.

The choice of Gemma 4 as the base model is significant. Google stated that Gemma 4 is derived from the same research and technology as its proprietary Gemini 3 model. This lineage means Gemopus models incorporate elements of Google’s cutting-edge AI technology, combined with an approach inspired by Anthropic’s Claude Opus in terms of output style and reasoning quality.

What differentiates Gemopus from many other Gemma 4 fine-tunes is its development philosophy. Jackrong intentionally avoided forcing the specific “chain-of-thought” reasoning patterns of Claude into Gemma’s weights. The approach rests on recent AI research suggesting that merely imitating a teacher model’s reasoning process does not instill true reasoning ability. Instead, Gemopus prioritizes high-quality answers, clear structure, and natural conversational flow, aiming to overcome Gemma’s sometimes rigid or overly academic tone.

Independent benchmarks conducted by AI infrastructure engineer Kyle Hessling have shown promising results for the 26B variant. Hessling reported that the model excels at one-shot requests over long contexts and demonstrates remarkable speed due to its MoE architecture. He described it as an “excellent finetune of an already exceptional model.”

Gemopus-4-26B-A4B from Jackrong is LIVE!

Happy to have benched this one pretty hard (see my benches in the model card) and it is an excellent finetune of an already exceptional model! My friend Jackrong is always cooking the greatest!

It rocks at one-shot requests over long…

— Kyle Hessling (@KyleHessling1) April 10, 2026

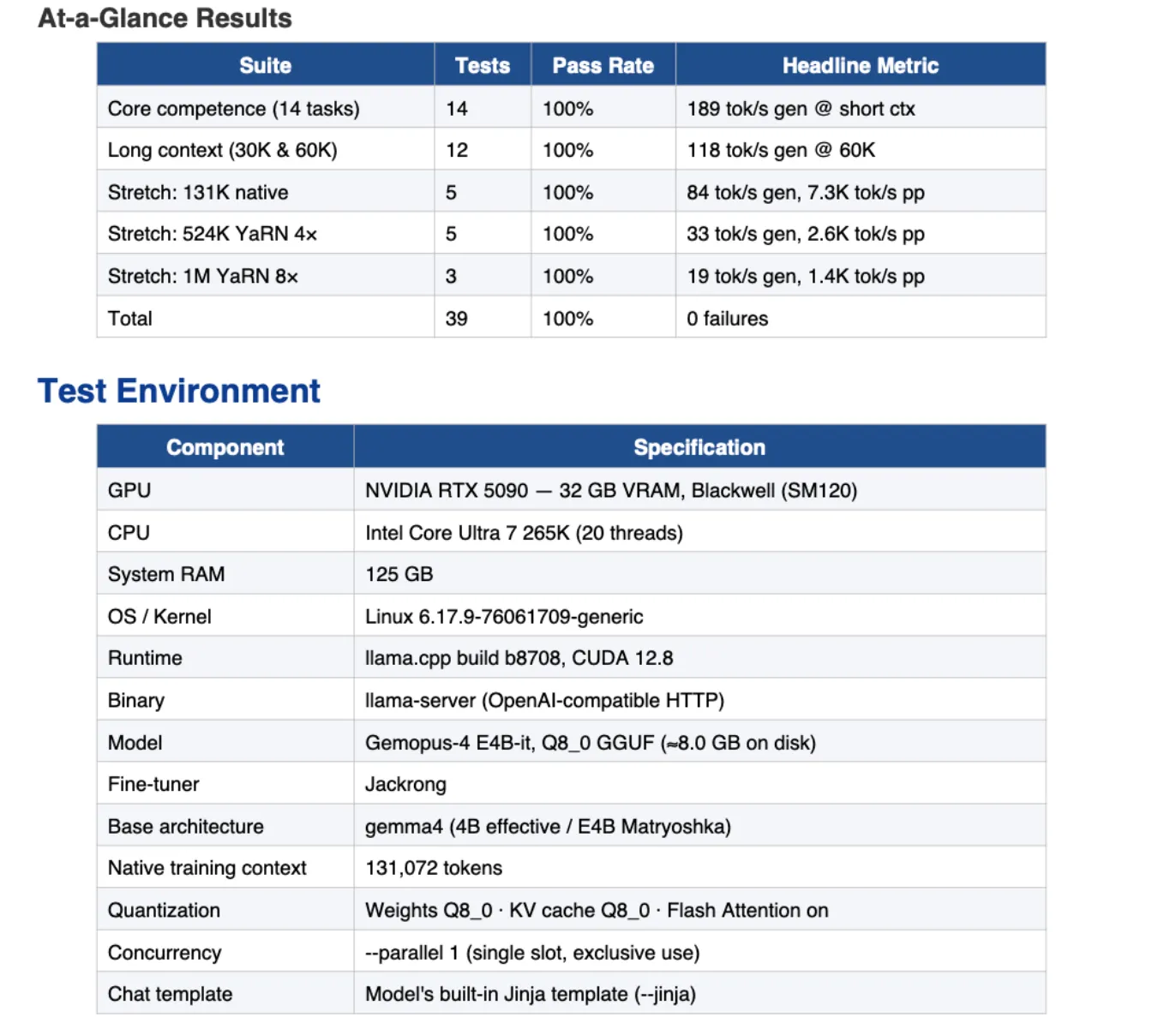

The smaller E4B model has also performed well, successfully passing core competence tests in areas such as instruction following, coding, mathematics, multi-step reasoning, translation, and safety. It also demonstrated strong performance in long-context tests, maintaining accuracy with inputs of 30K and 60K tokens. Notably, it excelled in needle-in-haystack retrieval tests, even handling a challenging stretch test at one million tokens with YaRN 8× RoPE scaling.

The 26B model supports extended context windows, natively up to 131K tokens and expandable to 524K with YaRN scaling, a feature also confirmed by Hessling’s extensive testing.
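The relationship between the native and extended windows is simple arithmetic, sketched below. The exact token counts are assumptions: "131K" is read as 128 × 1024 tokens and "524K" as 512 × 1024 tokens, and the helper names are hypothetical. The article does not state the scale factor used for the 26B model; the sketch only derives the factor those two figures imply.

```python
def extended_context(native_tokens: int, scale_factor: int) -> int:
    """Context length reachable when RoPE positions are stretched by scale_factor."""
    return native_tokens * scale_factor

def required_factor(native_tokens: int, target_tokens: int) -> int:
    """Smallest integer scale factor that covers target_tokens (ceiling division)."""
    return -(-target_tokens // native_tokens)

NATIVE_26B = 131_072  # assumption: "131K" means 128 * 1024 tokens
TARGET_26B = 524_288  # assumption: "524K" means 512 * 1024 tokens

factor = required_factor(NATIVE_26B, TARGET_26B)
print(factor, extended_context(NATIVE_26B, factor))
```

Under these assumptions the implied factor is 4. Scaling extends the positions a model can address; it does not by itself guarantee answer quality at that length, which is why long-context testing such as Hessling's matters.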

On edge hardware, the E4B model reaches 45–60 tokens per second on an iPhone 17 Pro Max and 90–120 tokens per second on a MacBook Air M3/M4 via MLX. The 26B MoE model is designed to run on systems with limited unified memory or on GPUs with less than 10GB of VRAM, making it a practical choice for users with standard configurations.
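To put the throughput figures in practical terms, the sketch below converts tokens per second into wall-clock time for a reply. The 500-token reply length and the function name are hypothetical choices for illustration, and the estimate covers generation only, not the time spent processing the prompt.

```python
def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to generate num_tokens at a steady decode rate (prompt processing excluded)."""
    return num_tokens / tokens_per_second

# Throughput ranges as reported in the article for the E4B model.
REPORTED_RANGES = {
    "iPhone 17 Pro Max": (45, 60),
    "MacBook Air M3/M4 (MLX)": (90, 120),
}
REPLY_TOKENS = 500  # hypothetical reply length

for device, (slow, fast) in REPORTED_RANGES.items():
    worst = generation_seconds(REPLY_TOKENS, slow)
    best = generation_seconds(REPLY_TOKENS, fast)
    print(f"{device}: {best:.1f} to {worst:.1f} s for {REPLY_TOKENS} tokens")
```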

Both Gemopus models are provided in GGUF format, enabling easy integration with popular platforms like LM Studio and llama.cpp. The development pipeline, including training code and fine-tuning guides, is available on Jackrong’s GitHub. It relies on Unsloth and LoRA and is published so that others can reproduce the results.
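As a rough illustration of what running a GGUF file through llama.cpp involves, the sketch below assembles a `llama-cli` command line. The model filename, the helper name, and the chosen values are hypothetical. The flags shown are ones llama.cpp has provided for context size, GPU offload, and YaRN RoPE scaling, but option names can change between releases, so treat this as an assumption to check against `llama-cli --help` for your build.

```python
import shlex

def llama_cli_command(model_path, ctx_size, native_ctx=None, gpu_layers=0):
    """Build an argument list for llama.cpp's llama-cli (illustrative, not exhaustive)."""
    cmd = [
        "llama-cli",
        "-m", model_path,         # path to the GGUF file
        "-c", str(ctx_size),      # requested context window in tokens
        "-ngl", str(gpu_layers),  # layers to offload to the GPU (0 = CPU only)
    ]
    # Only request YaRN scaling when the window exceeds the native one.
    if native_ctx is not None and ctx_size > native_ctx:
        scale = ctx_size / native_ctx
        cmd += [
            "--rope-scaling", "yarn",
            "--rope-scale", f"{scale:g}",
            "--yarn-orig-ctx", str(native_ctx),
        ]
    return cmd

# Hypothetical filename; use the actual file name from the model repository.
cmd = llama_cli_command("gemopus-4-26b-a4b.gguf", 524_288, native_ctx=131_072)
print(shlex.join(cmd))
```

Building the argument list in code rather than by hand keeps the scale factor consistent with the requested window, which is easy to get wrong when typing the flags manually.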

However, Gemopus is still under development, and some functionality is incomplete. Tool calling, for instance, is currently problematic across the Gemma 4 series in common inference engines, which may affect workflows that rely on agentic capabilities. Jackrong characterizes Gemopus as an “engineering exploration reference” rather than a production-ready solution and recommends his Qwopus 3.5 series for demanding workloads that need more stability.

Yeah the philosophy on this one was stability first, it is my understanding that the Gemma models tend to become unstable if you force a bunch of Claude thinking traces into them, you can see this when testing many other Opus gemma fine tunes on hugging face.

Jackrong tried a…

— Kyle Hessling (@KyleHessling1) April 10, 2026

For those interested in improving Gemma’s reasoning capabilities further, a community project called Ornstein, developed by DJLougen, focuses on enhancing reasoning chains without external model dependencies.

Although Gemma 4’s training dynamics can be more sensitive to hyperparameters than those of other models, Jackrong emphasizes that Gemopus offers a compelling option for users seeking an AI with Opus-style polish and an “American” origin. A larger 31B Gemopus variant is also reportedly in development.

Long-Term Technological Impact

The release of Gemopus represents a significant step in the democratization of advanced AI. By building on open-source foundations like Google’s Gemma and adopting efficient architectures like MoE, such projects lower the barrier to entry for using sophisticated AI models. This trend directly supports the growth of Web3 by enabling decentralized applications to integrate powerful AI functionalities without relying on centralized cloud providers. The focus on local execution enhances user privacy and data security, key tenets of decentralized technologies. Furthermore, the ongoing efforts to optimize models for consumer hardware, as seen with the E4B variant, will accelerate the adoption of AI tools across a wider range of devices, fostering innovation in areas like on-device processing, AI-powered gaming, and personalized digital assistants within the blockchain ecosystem.

Learn more at: decrypt.co