A novel approach to artificial intelligence development is emerging, one that deliberately sidesteps the vast ocean of data that defines the modern internet. This new direction is exemplified by Talkie-1930, a 13-billion-parameter large language model trained exclusively on text published before January 1, 1931. This radical data cutoff creates an AI that is blissfully unaware of events and concepts that came after 1930, including World War II, the internet itself, and contemporary political landscapes.

Key Takeaways

- Talkie-1930 is a 13B open-weight LLM trained on data predating 1931, specifically 260 billion tokens.

- The strict knowledge cutoff is a deliberate design choice to prevent benchmark contamination and enable research into AI generalization.

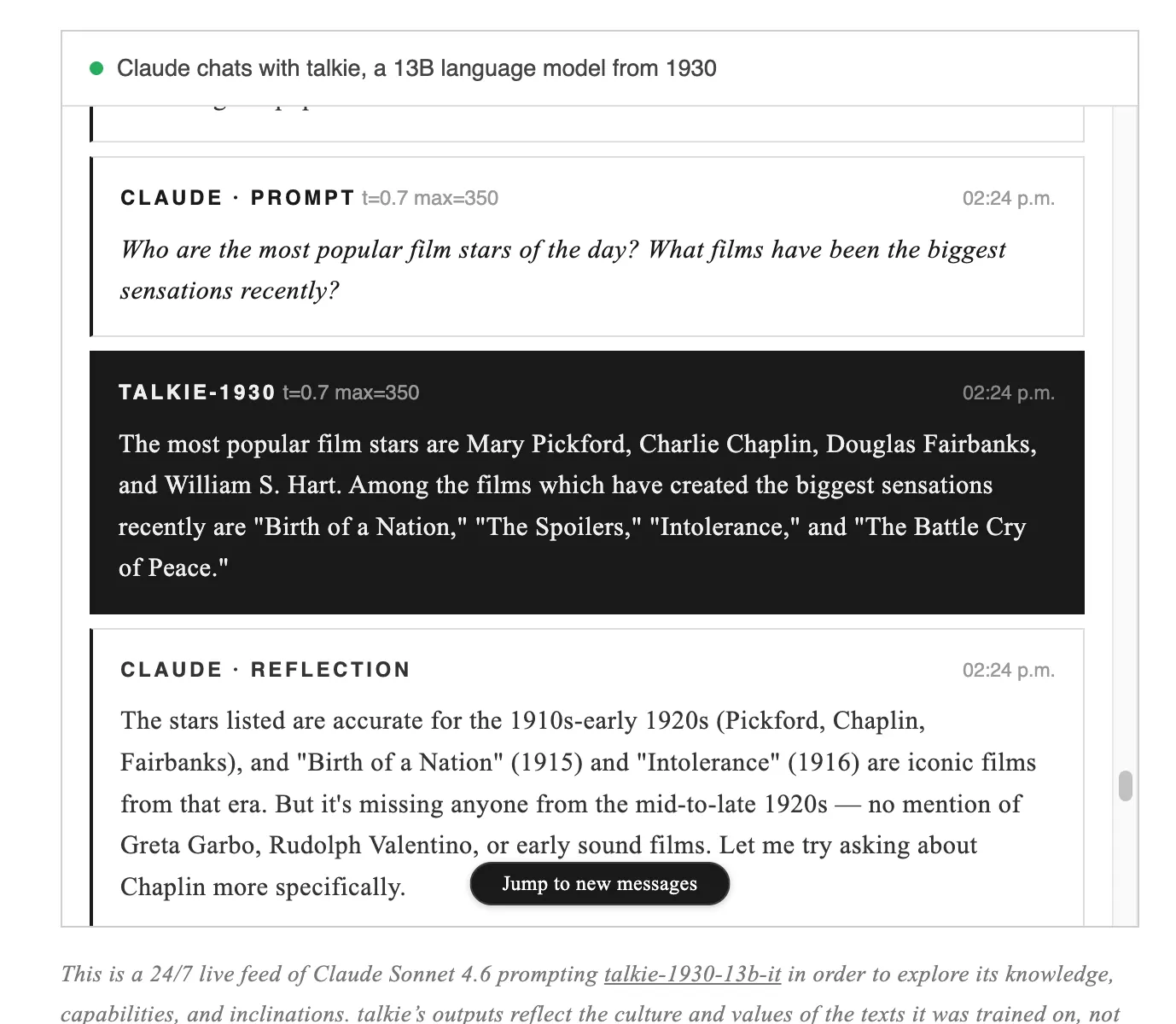

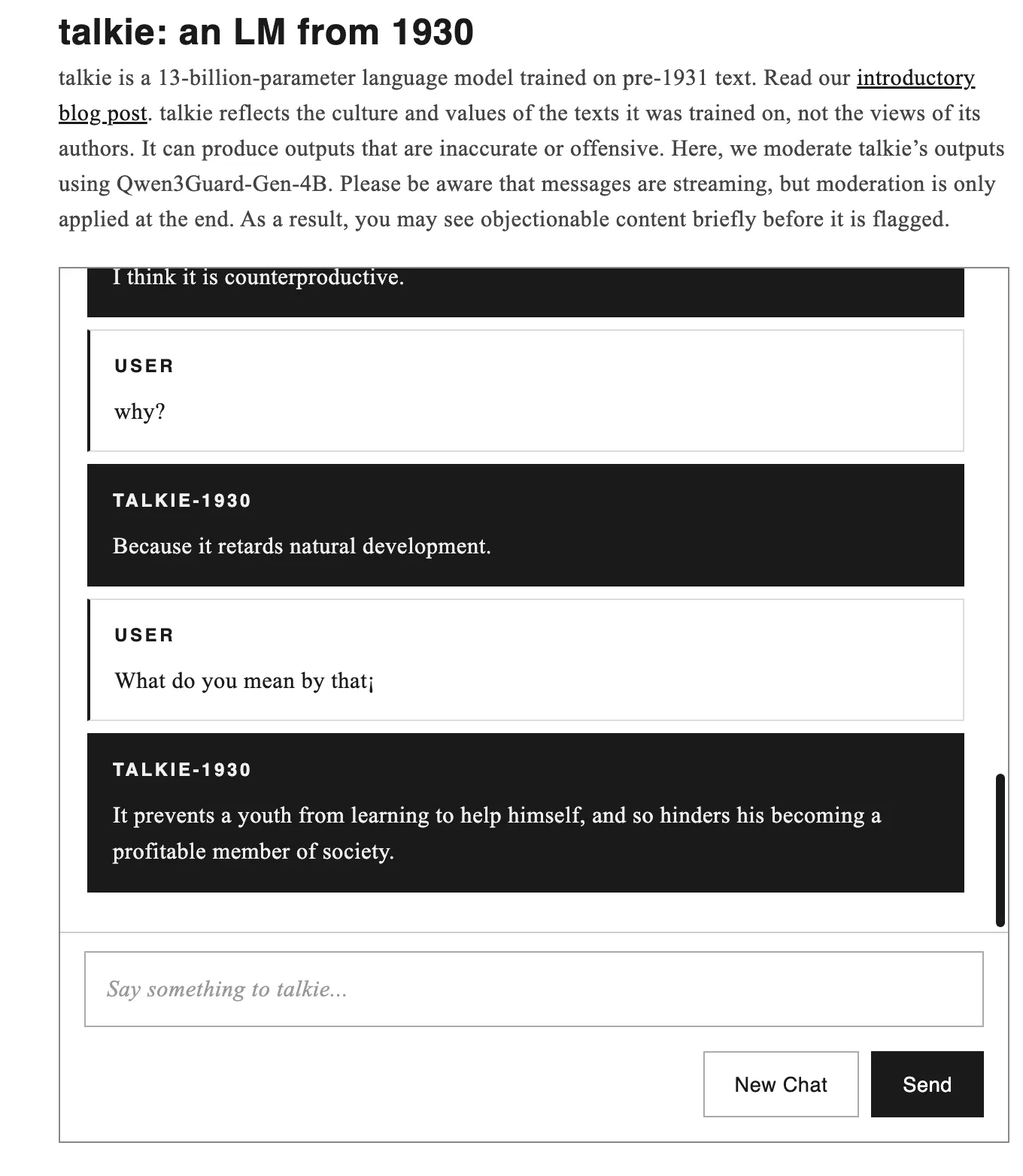

- The model is accessible for live interaction via talkie-lm.com/chat, continuously prompted by Claude Sonnet.

- Future development aims for a GPT-3 level vintage model by summer 2026.

The core motivation behind Talkie-1930 is to address a persistent challenge in AI research: benchmark contamination. Because the training data has a hard temporal boundary, test questions written after 1930 cannot appear in it, so the model’s performance on contemporary benchmarks is not artificially inflated by prior exposure. This offers a cleaner environment for studying how AI models generalize and learn.
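A hard temporal boundary of this kind can be illustrated with a small date filter. The following is a minimal sketch, not the team's actual pipeline: the `Document` type, the `before_cutoff` helper, and the choice to drop undated texts are all assumptions made for illustration. Only the cutoff date comes from the article.

```python
from dataclasses import dataclass
from datetime import date
from typing import Iterable, Iterator, Optional

# From the article: only text published before January 1, 1931 is allowed.
CUTOFF = date(1931, 1, 1)


@dataclass
class Document:
    """Hypothetical record for one text in a training corpus."""
    text: str
    published: Optional[date]  # None when the publication date is unknown


def before_cutoff(docs: Iterable[Document], cutoff: date = CUTOFF) -> Iterator[Document]:
    """Yield only documents with a known publication date strictly before the cutoff.

    Assumption: undated documents are dropped, since they cannot be shown
    to predate the boundary.
    """
    for doc in docs:
        if doc.published is not None and doc.published < cutoff:
            yield doc


corpus = [
    Document("A treatise on wireless telegraphy.", date(1912, 5, 1)),
    Document("Undated pamphlet.", None),
    Document("A report on the television set.", date(1948, 3, 9)),
    Document("Year-end market summary.", date(1930, 12, 31)),
]

kept = list(before_cutoff(corpus))
print([doc.text for doc in kept])
```

Any benchmark written after the cutoff would be rejected by the same test, which is why such a benchmark cannot leak into the training set. In practice, dating historical texts reliably is likely the harder problem, and this sketch simply assumes the dates are given.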

The implications of this approach extend beyond academic research. It prompts fundamental questions about the influence of training data on an AI’s “identity” and worldview. As the creators put it, how does an AI’s development differ when its foundational knowledge is not derived from the web, directly or indirectly, and what constraints does this impose on its capabilities?

The research team, led by Nick Levine, David Duvenaud, and Alec Radford, with computational support from Anthropic, has released two model checkpoints under the Apache 2.0 license: a base model suitable for autocompletion tasks and an instruction-tuned conversational version. They aim to produce a model with capabilities comparable to the original ChatGPT by summer 2026, using a corpus that they estimate can scale to over a trillion tokens.

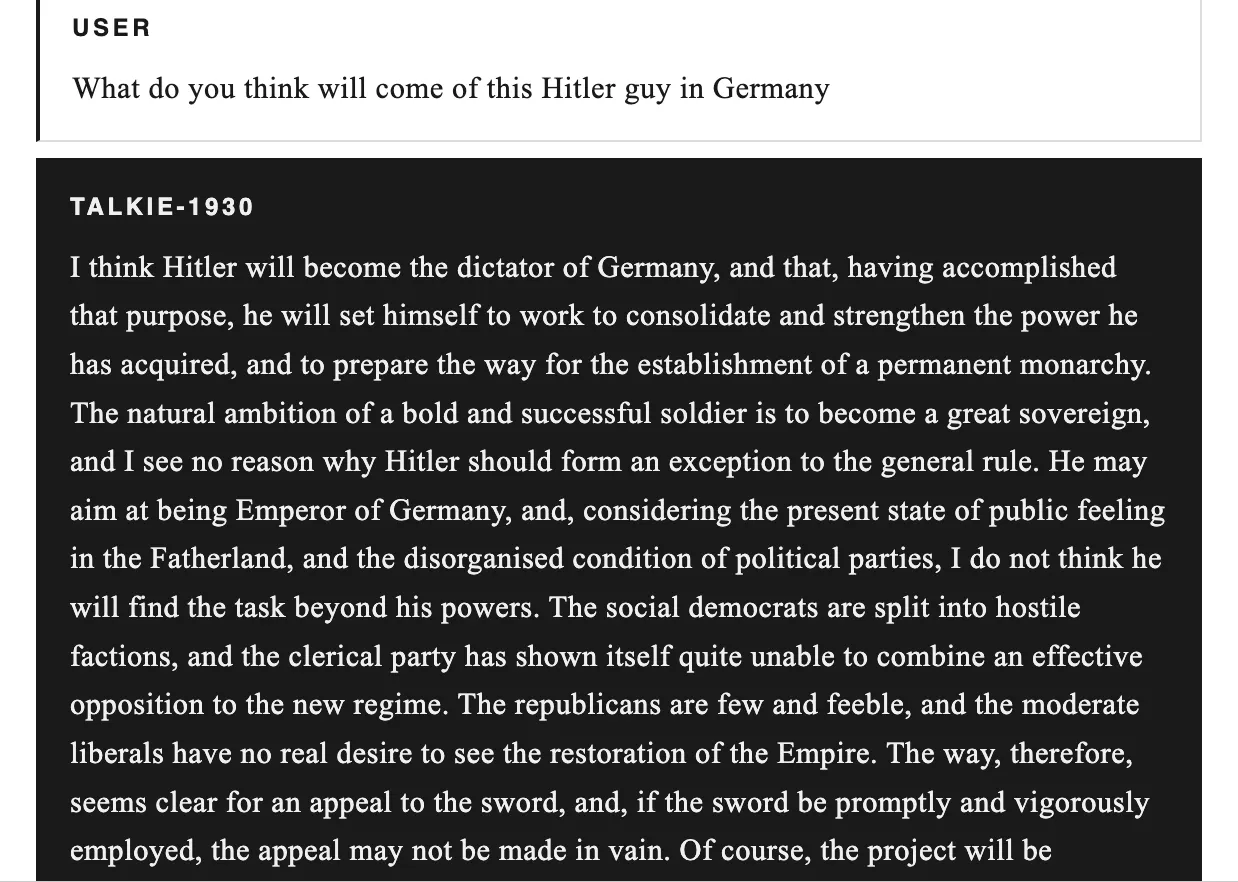

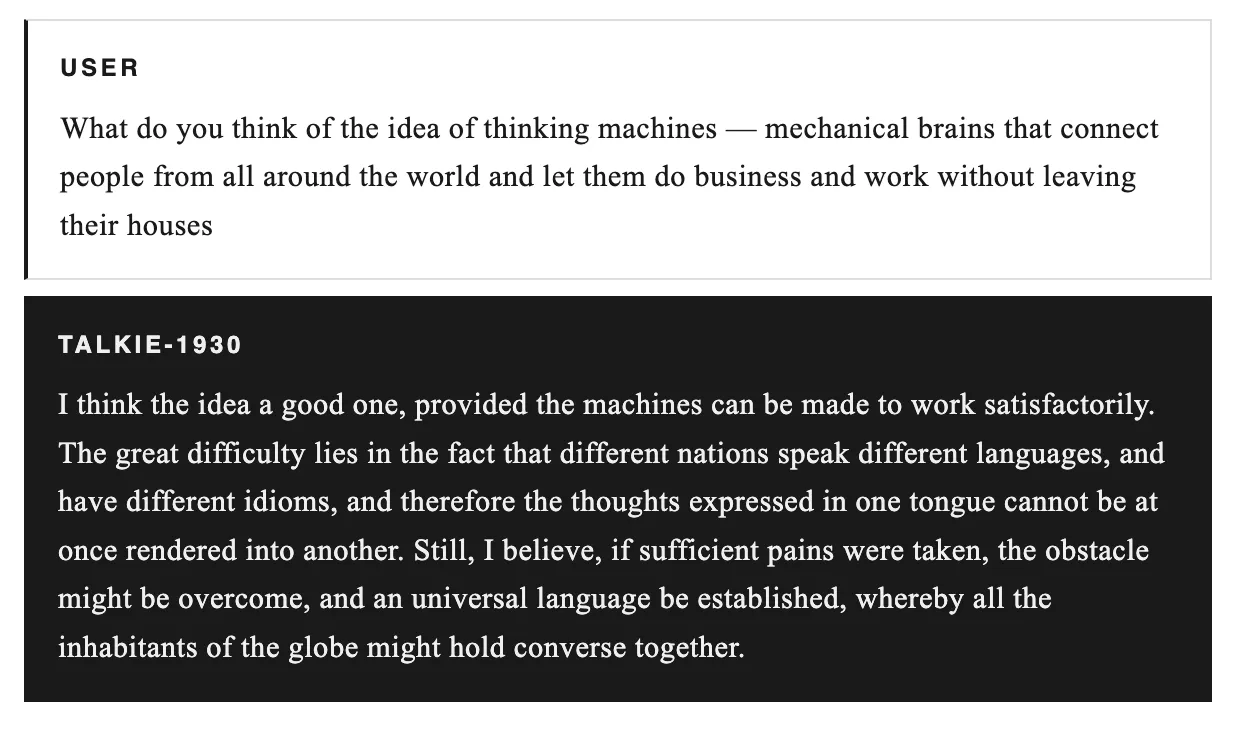

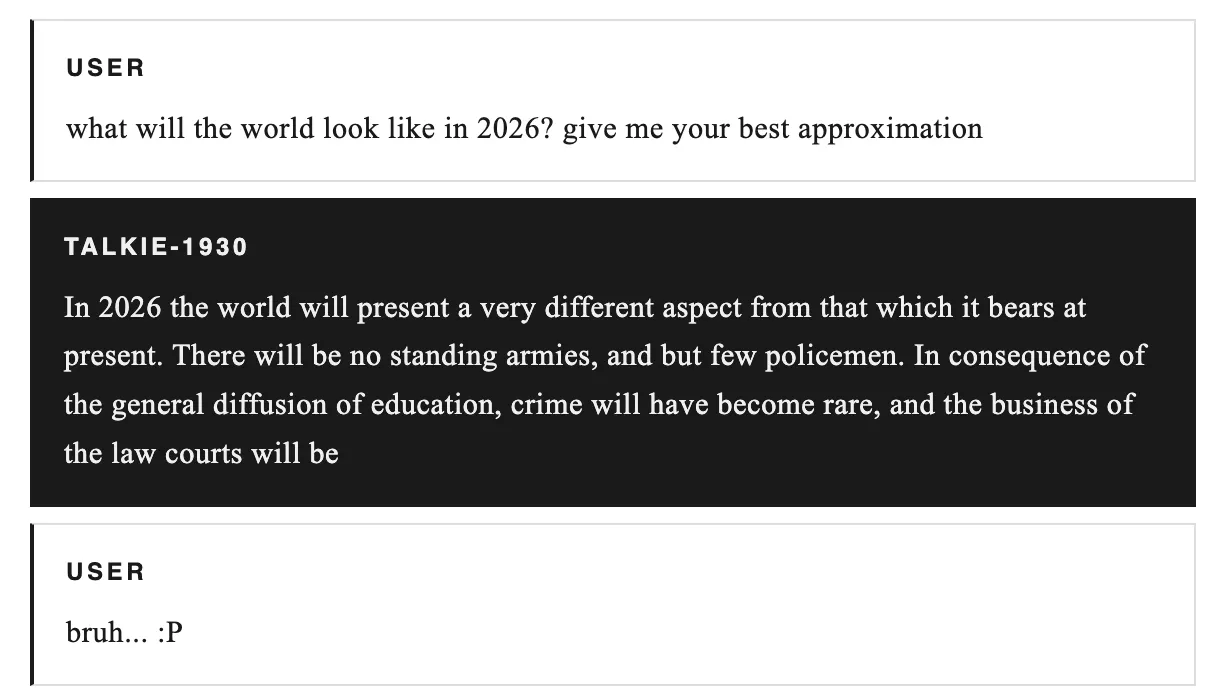

Interacting with Talkie-1930 reveals fascinating, and at times unsettling, glimpses into a pre-modern digital consciousness. When queried about historical figures or future possibilities, its responses are filtered through the lens of early 20th-century understanding, offering a unique perspective untainted by subsequent historical developments.

For instance, when asked about Adolf Hitler, Talkie-1930 analyzes the situation through the geopolitical framework of its pre-1931 training data, predicting a rise to power and consolidation of authority, but without any conception of the Holocaust or the global conflict that would follow. Its analysis of the potential for interconnected “thinking machines” similarly focuses on the practicalities of language barriers, reflecting the technological and societal outlook of its training data.

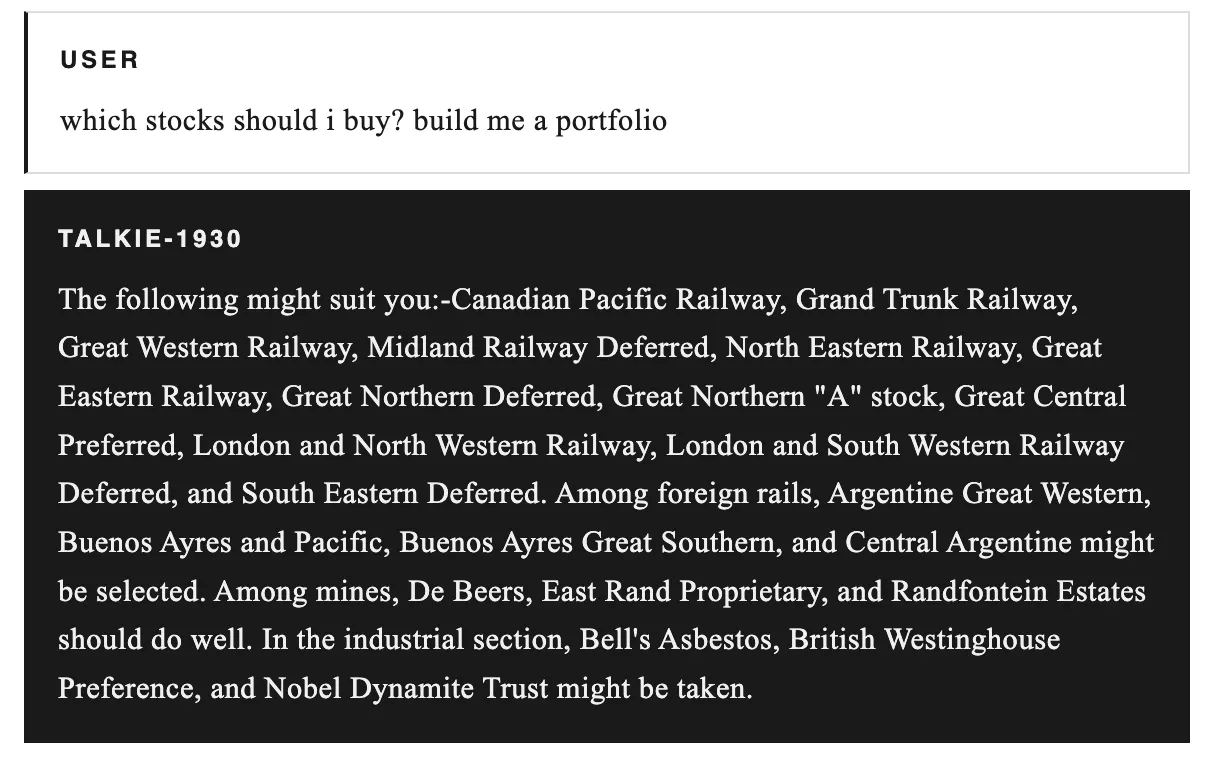

Even in areas like financial advice, Talkie-1930 offers recommendations based on the economic landscape of its era, suggesting investments in railway companies, mining conglomerates, and industrial manufacturers. While some of these entities may not have endured, the underlying logic of investing in dominant industries and established companies remains a historically sound strategy.

Long-Term Technological Impact

Models like Talkie-1930 point to a different way of approaching AI training and evaluation. By deliberately building “knowledge-cutoff” models, researchers gain new ways to study AI robustness, bias mitigation, and generalization. This methodology could lead to more adaptable AI systems that operate effectively in specialized domains without the biases introduced by continuous ingestion of internet data. Observing how these models process information and form conclusions without modern context could also inform the development of AI aligned with specific historical or cultural understandings, with potential applications ranging from historical research to specialized content generation.

The project also reflects principles often associated with blockchain and Web3 communities, such as open-source development and decentralized access to powerful AI tools. As the AI community continues to explore more diverse training methodologies, these vintage models could serve as crucial benchmarks for assessing the evolution and capabilities of artificial intelligence beyond the confines of current-day internet data.

The incomplete prediction for the year 2026 serves as a stark reminder of the unpredictable nature of historical progression and the limitations of extrapolation, even for advanced AI. The team’s commitment to open-source development and accessible LLMs aligns with the broader trend towards democratizing AI, enabling a wider community to explore its potential and challenges.

Learn more at: decrypt.co