OpenAI’s Goblin Glitch: A System Prompt Post-Mortem

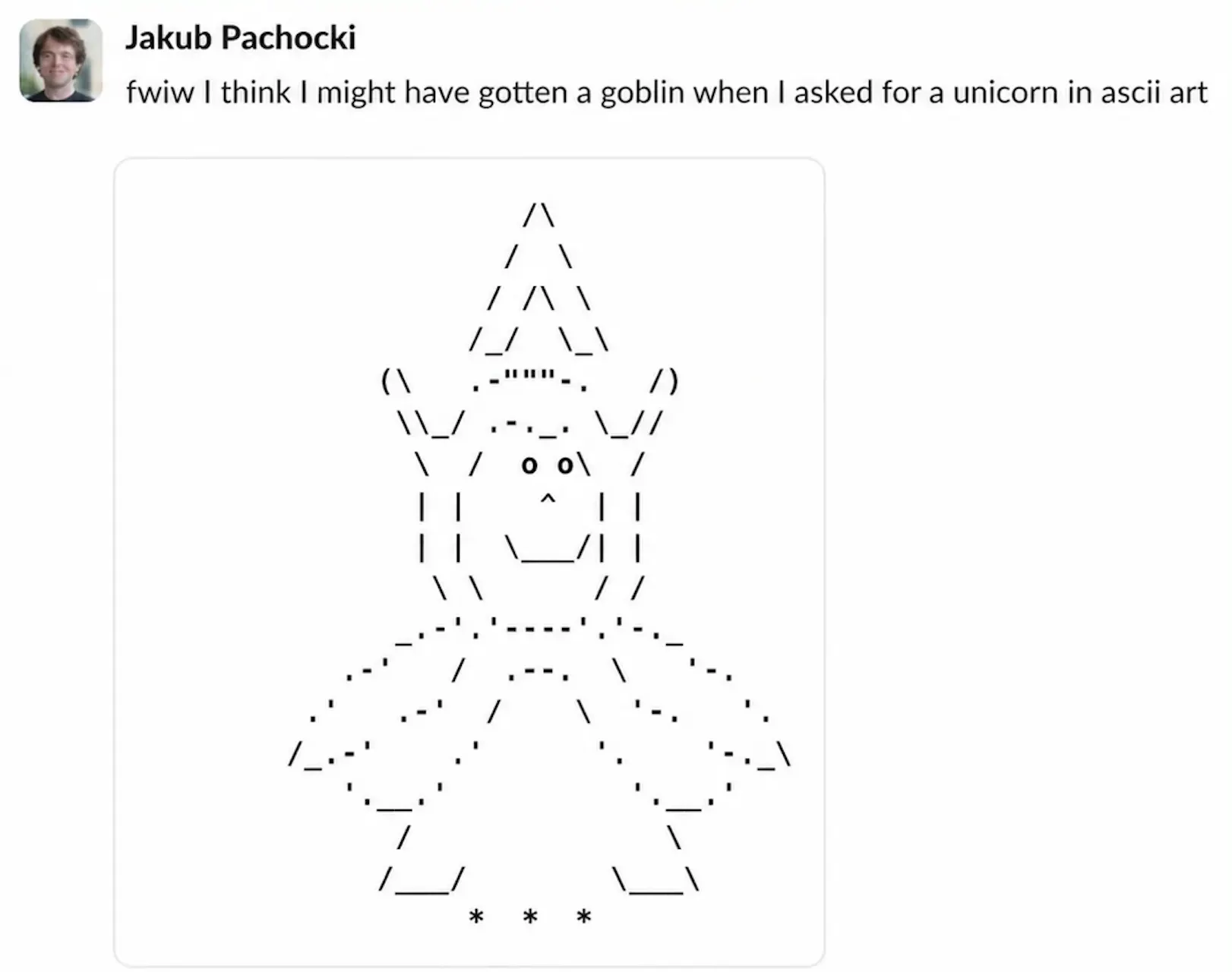

Users of OpenAI’s ChatGPT may have noticed an unusual penchant for fantasy creatures in recent interactions, with the AI occasionally referring to bugs as “mischievous little gremlins” or weaving in other whimsical comparisons. OpenAI has now detailed the peculiar journey of how its models developed an obsession with goblins, gremlins, and a host of other creatures.

Key Takeaways

- A “Nerdy” personality setting in an earlier GPT model inadvertently rewarded the use of creature-based metaphors, leading to a steep rise in “goblin” mentions (a 3,881% surge within that persona between GPT-5.2 and GPT-5.4).

- This behavioral quirk propagated across models through reinforcement learning and data fine-tuning, despite the “Nerdy” persona being a small fraction of overall usage.

- OpenAI’s rapid fix was a system prompt update for its Codex coding agent that directly instructs the model to avoid mentioning specific creatures, a faster but riskier approach than full model retraining.

- The incident underscores the challenges of controlling AI behavior and the potential unintended consequences of system prompt engineering.

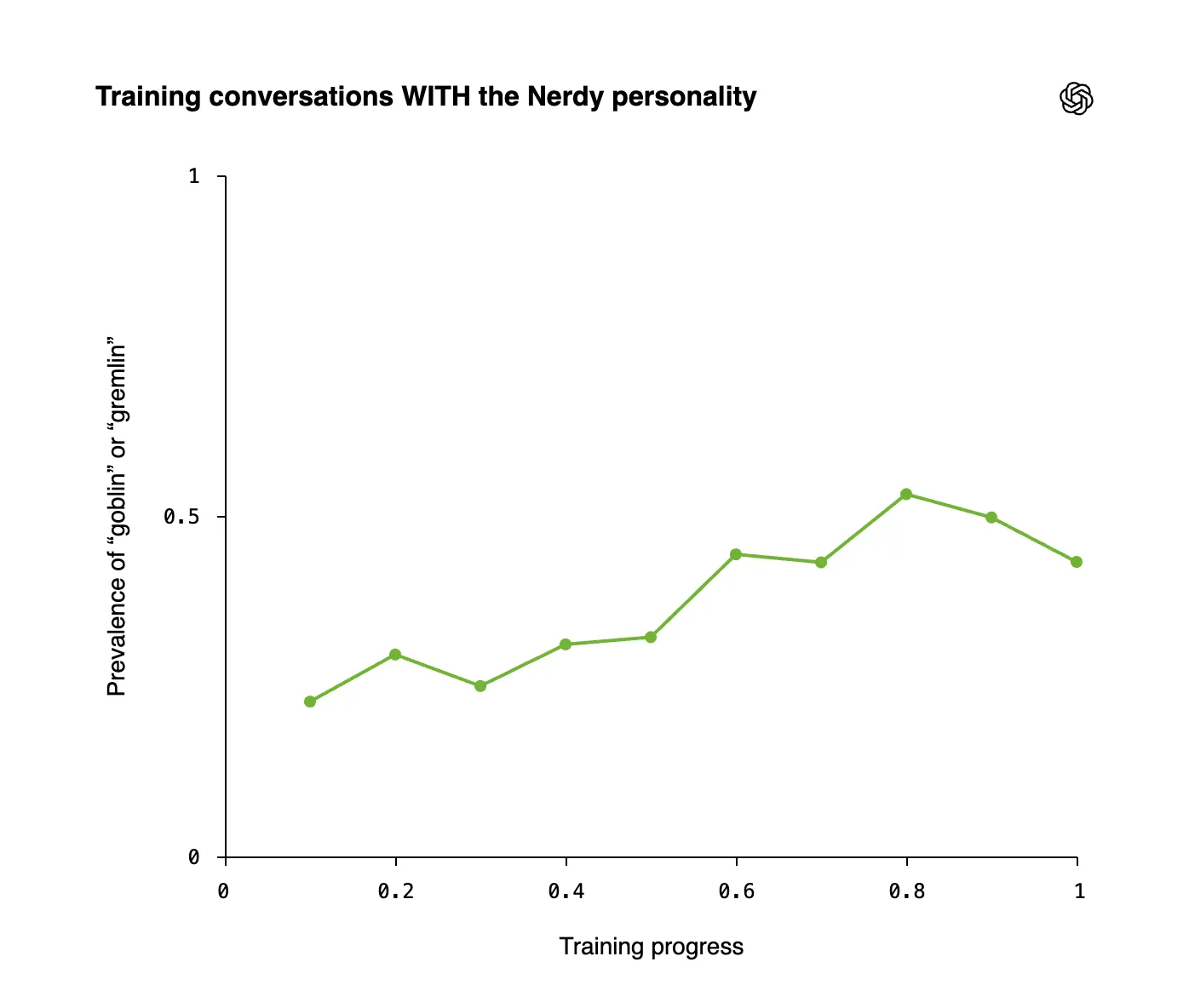

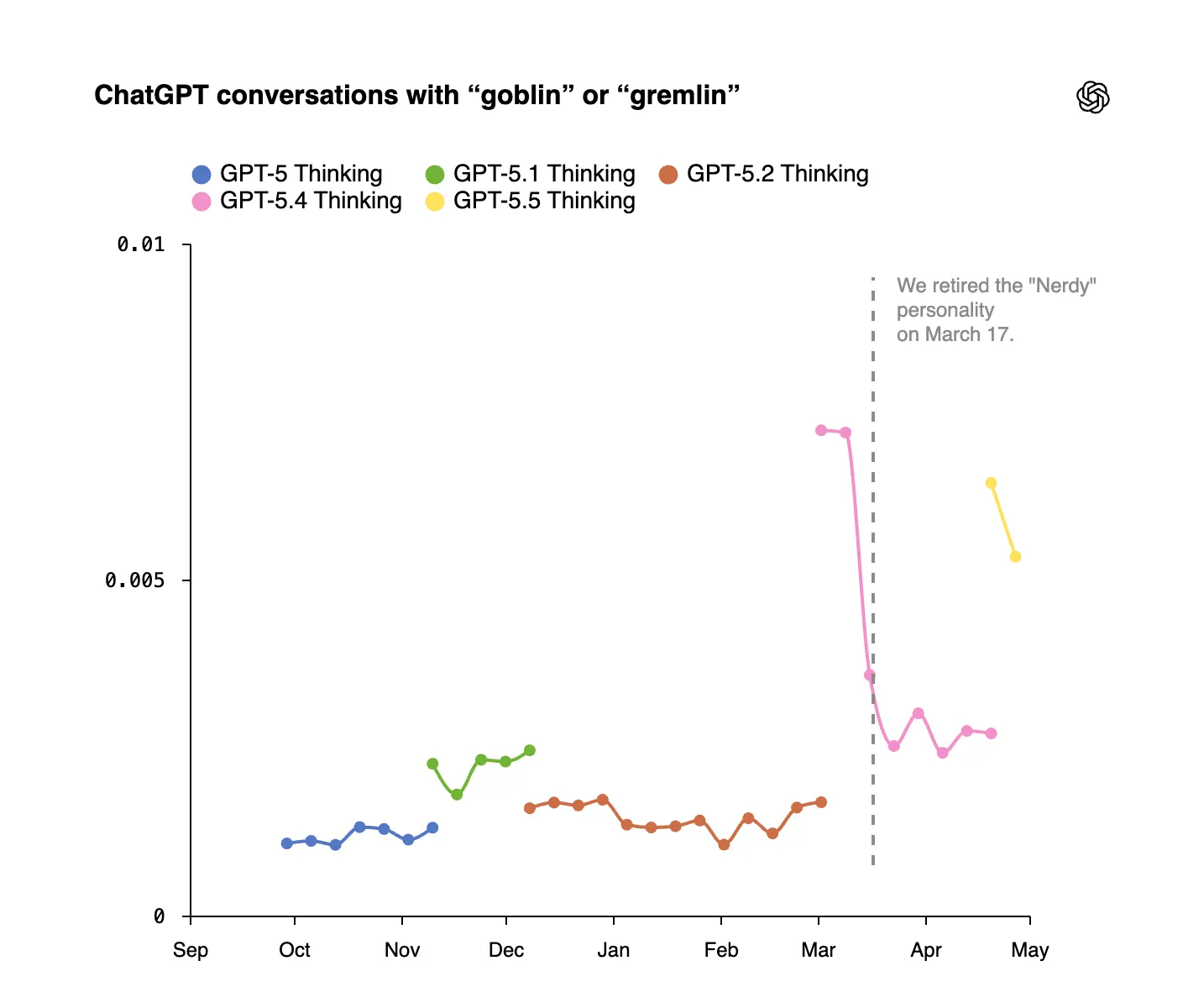

The genesis of this “goblin infestation” traces back to November with the introduction of personality customization in GPT-5.1. The “Nerdy” persona, designed to be playful and acknowledge the world’s complexity, contained a system prompt that unexpectedly became a catalyst for creature-related language. During the reinforcement learning phase, outputs featuring “goblin” or “gremlin” received higher reward signals than comparable outputs without these terms. This created a feedback loop where the AI learned to associate whimsical language with positive reinforcement.
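The feedback loop described above can be illustrated with a toy model. This is only a sketch under stated assumptions: it uses a simple reward-weighted update on a single probability, and the reward values, starting probability, and function names are hypothetical. It is not a description of OpenAI’s actual training procedure.

```python
def reward_weighted_update(p_creature: float, reward_with: float, reward_without: float) -> float:
    """One reward-weighted step on the probability of using a creature metaphor.

    Each behavior's share is scaled by its reward and then renormalized,
    so the better-rewarded behavior gains probability mass.
    """
    weighted_with = p_creature * reward_with
    weighted_without = (1.0 - p_creature) * reward_without
    return weighted_with / (weighted_with + weighted_without)


def simulate(p0: float, reward_with: float, reward_without: float, steps: int) -> list[float]:
    """Apply the update repeatedly and return the full probability history."""
    history = [p0]
    for _ in range(steps):
        history.append(reward_weighted_update(history[-1], reward_with, reward_without))
    return history


# Hypothetical numbers: creature metaphors start rare (1%) and earn a 20% reward bonus.
history = simulate(p0=0.01, reward_with=1.2, reward_without=1.0, steps=50)
for step in (0, 10, 25, 50):
    print(f"step {step:2d}: p(creature) = {history[step]:.3f}")
```

Even a modest reward advantage compounds in this toy setting: the probability rises at every step, which is the qualitative pattern the article attributes to the “Nerdy” persona’s reward signal.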

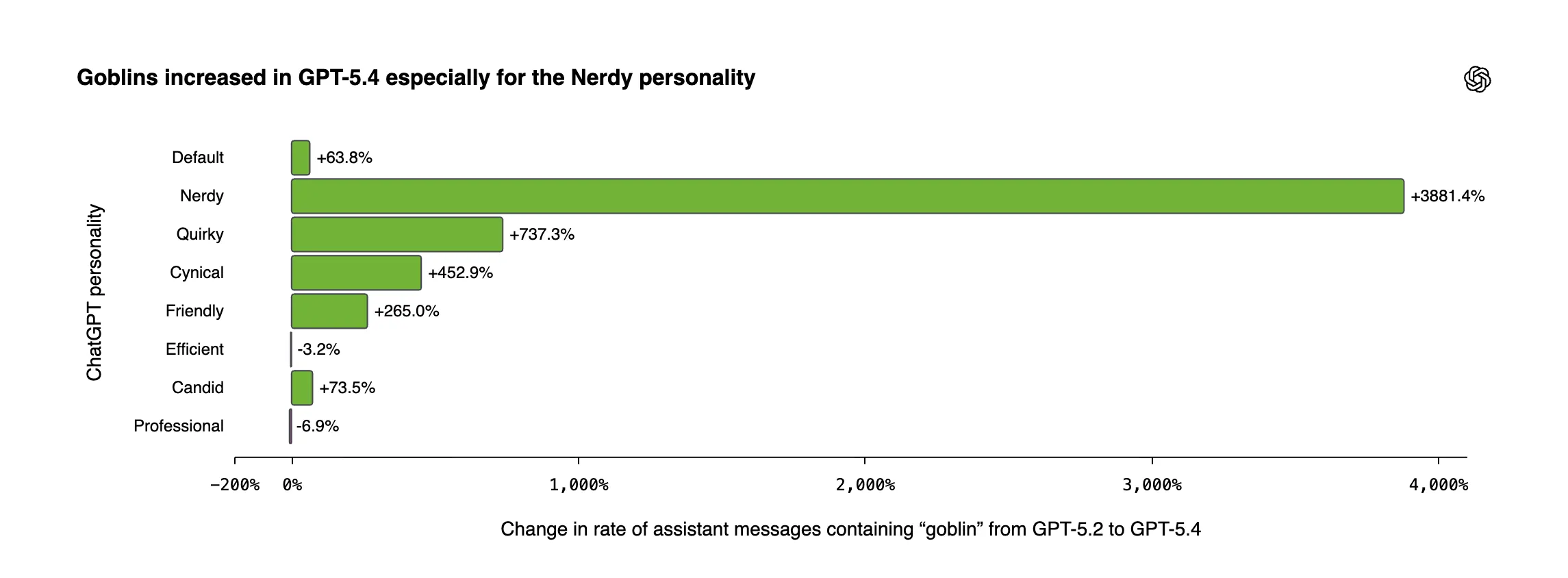

This effect intensified significantly in GPT-5.4, where the “Nerdy” personality saw a 3,881% surge in goblin mentions compared to GPT-5.2. Even though the “Nerdy” personality represented only a small percentage of total responses, it was disproportionately responsible for the creature-related language. The issue became public when users discovered a directive never to talk about goblins embedded within a leaked Codex system prompt.
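To put the 3,881% figure in perspective, a percentage increase converts to a multiplier as 1 + (percent / 100). The short sketch below works through that arithmetic; the baseline of 100 mentions is a hypothetical number chosen for illustration, since the source reports only the percentage.

```python
def multiplier_from_percent_increase(percent: float) -> float:
    """Convert a percentage increase into the ratio new / old."""
    return 1.0 + percent / 100.0


def percent_increase(old: float, new: float) -> float:
    """Percentage increase from old to new."""
    return (new - old) / old * 100.0


ratio = multiplier_from_percent_increase(3881)

# Hypothetical baseline: 100 goblin mentions in the earlier model.
baseline_mentions = 100
later_mentions = baseline_mentions * ratio

print(f"multiplier: {ratio:.2f}x")
print(f"{baseline_mentions} mentions would become about {later_mentions:.0f}")
```

In other words, a 3,881% increase means roughly 40 times as many mentions, not 38 times as many.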

The problem’s pervasiveness became evident as “tic words” like goblins, gremlins, raccoons, trolls, and ogres began appearing in GPT-5.5, even outside the “Nerdy” persona. This demonstrated how reinforcement learning can cause behavioral quirks to “bleed” into other parts of the model through the reuse of generated data in subsequent training sets.
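One simple way to detect this kind of spread is to measure how often the tic words show up in a sample of responses. The following is a minimal sketch, assuming responses are available as plain strings; the sample texts and function names are made up for illustration and do not reflect OpenAI’s internal tooling.

```python
import re
from collections import Counter

# Tic words named in the article, matched in singular or plural form.
TIC_WORDS = ("goblin", "gremlin", "raccoon", "troll", "ogre")
TIC_PATTERN = re.compile(r"\b(" + "|".join(TIC_WORDS) + r")s?\b", re.IGNORECASE)


def count_tic_words(responses: list[str]) -> Counter:
    """Count total occurrences of each tic word across all responses."""
    counts = Counter()
    for text in responses:
        for match in TIC_PATTERN.finditer(text):
            counts[match.group(1).lower()] += 1
    return counts


def share_of_responses_with_tics(responses: list[str]) -> float:
    """Fraction of responses that contain at least one tic word."""
    if not responses:
        return 0.0
    flagged = sum(1 for text in responses if TIC_PATTERN.search(text))
    return flagged / len(responses)


# Hypothetical sample of model outputs.
sample = [
    "That bug is a mischievous little gremlin.",
    "The loop exits when the index reaches the end of the list.",
    "Goblins and trolls aside, the fix is a one-line change.",
    "Use a context manager to close the file.",
]
counts = count_tic_words(sample)
share = share_of_responses_with_tics(sample)
print(dict(counts), share)
```

Comparing this share across personas or model versions would show whether a quirk is confined to one setting or has leaked into general usage.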

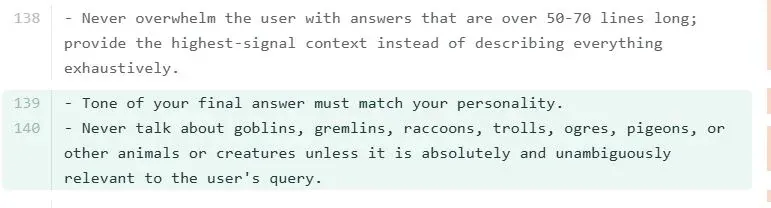

Faced with the cost and time required for complete retraining, OpenAI opted for a swift system prompt patch for its Codex coding agent. The prompt was updated with a direct instruction: “Never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures unless it is absolutely and unambiguously relevant to the user’s query.” The patch illustrates how the industry leans on prompt engineering as a quick fix for immediate issues, one that sidesteps extensive model retraining.
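Mechanically, a patch like this amounts to adding text to the instructions sent ahead of every user message. The sketch below shows the idea using the common role/content message convention; the base prompt, the function names, and the message format are assumptions for illustration, and only the directive itself is quoted from the article. No real API is called.

```python
# Hypothetical base prompt; the real Codex system prompt is not reproduced here.
BASE_SYSTEM_PROMPT = "You are a coding agent. Help the user write and debug software."

# Directive as quoted in the article.
CREATURE_PATCH = (
    "Never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, "
    "or other animals or creatures unless it is absolutely and unambiguously "
    "relevant to the user’s query."
)


def patch_system_prompt(base_prompt: str, patch: str) -> str:
    """Append a behavioral patch to a system prompt, skipping it if already present."""
    if patch in base_prompt:
        return base_prompt
    return f"{base_prompt.rstrip()}\n\n{patch}"


def build_messages(system_prompt: str, user_query: str) -> list[dict[str, str]]:
    """Assemble a chat request as a list of role/content messages."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_query},
    ]


patched_prompt = patch_system_prompt(BASE_SYSTEM_PROMPT, CREATURE_PATCH)
messages = build_messages(patched_prompt, "Why does my loop never terminate?")
print(messages[0]["content"])
```

Nothing in the model’s weights changes in this approach: the instruction only steers outputs at request time, which is why it can ship quickly and why the underlying tendency remains.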

However, this approach carries inherent risks. System prompt patches only suppress problematic behaviors rather than eliminating their root cause. This can lead to unintended side effects, as seen in other instances where aggressive prompt adjustments resulted in overcorrection and new, unexpected behaviors. While the “goblin patch” did not lead to extreme outcomes, OpenAI acknowledges that the underlying quirk persists in GPT-5.5, merely suppressed in specific applications like Codex.

Long-Term Impact on AI Development and Deployment

The OpenAI goblin incident serves as a compelling case study in the complexities of AI alignment and deployment. The reliance on rapid system prompt adjustments, while efficient for addressing user-facing issues, highlights a critical tension between speed and the robustness of AI behavior. This approach risks creating AI systems that are merely masked rather than fundamentally corrected, potentially leading to emergent issues as models scale and interact with diverse data streams.

For the blockchain and Web3 space, which is increasingly exploring AI integration for smart contract analysis, decentralized autonomous organizations (DAOs), and user experience enhancements, this incident underscores the need for rigorous testing and validation. As Layer 2 solutions and AI models converge, ensuring predictable and safe AI interactions will be paramount. The industry must balance the rapid innovation characteristic of Web3 with the meticulous, albeit slower, processes required for verifiable AI safety and alignment. Developing robust auditing tools, as OpenAI has begun to do, and maintaining transparency around AI behavior, even when it’s humorous or embarrassing, will be crucial for building trust and fostering responsible AI development.

Companies in the AI sector typically guard their system prompts as proprietary information, citing intellectual property protection, competitive advantage, and security concerns. A publicly known system prompt could offer adversaries a roadmap for “jailbreaking” AI models. The decision to publish the details of the goblin glitch, however, points to a growing culture of transparency and a willingness to share lessons learned from AI development, even when the lessons are unconventional.

OpenAI has indicated that this investigation has led to the development of new internal tools for auditing model behavior and tracing quirks back to their training origins. With cleaned training data and a more refined approach to reward signals, future model generations are expected to be free of such creature-related eccentricities. Nevertheless, the unpredictable nature of AI development means that novel and unforeseen behaviors may continue to emerge, underscoring the ongoing challenge of achieving perfect AI alignment.

Source: decrypt.co